Zavala 4.2 is out. The big feature in this release is Outline Locking (like in Apple’s Notes). You can read more about it in the Zavala Help. zavala.vincode.io/help/Lock…


Zavala 4.1

Another Zavala release is here. This time there is a lot going on with Markdown capabilities.

Markdown Import

You can now directly import Markdown documents into Zavala. Markdown documents are very unstructured and Zavala is a very structured outliner, so this was something of a challenge. In the end it worked out rather well. Markdown headings become Zavala rows. Markdown paragraphs become Zavala note paragraphs.

I’ve done a fair amount of testing on this using open source project documents and Zavala has been able to parse them all. Sometimes there are page layout issues, but for the most part it just works.
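To make the heading-to-row mapping concrete, here is a minimal sketch in Python. It is not Zavala's importer (Zavala is a Swift app and its parser is not shown here); the `Row` class and `markdown_to_outline` function are hypothetical names, and the sketch only handles `#` style headings and plain paragraphs.

```python
import re
from dataclasses import dataclass, field

# Matches ATX headings such as "## Our Pledge" (1 to 6 leading # characters).
HEADING = re.compile(r"^(#{1,6})\s+(.+?)\s*$")


@dataclass
class Row:
    """Hypothetical outline row: a topic, note paragraphs, and child rows."""
    topic: str
    level: int = 0
    note: list[str] = field(default_factory=list)
    children: list["Row"] = field(default_factory=list)


def markdown_to_outline(text: str) -> Row:
    """Turn headings into nested rows and paragraphs into row notes."""
    root = Row(topic="", level=0)
    stack = [root]              # path from the root to the current row
    paragraph: list[str] = []   # lines of the paragraph being collected

    def flush() -> None:
        # Attach the finished paragraph to the most recent row's note.
        if paragraph:
            stack[-1].note.append(" ".join(paragraph))
            paragraph.clear()

    for line in text.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            level = len(match.group(1))
            # Pop until the top of the stack is a shallower heading.
            while stack[-1].level >= level:
                stack.pop()
            row = Row(topic=match.group(2), level=level)
            stack[-1].children.append(row)
            stack.append(row)
        elif line.strip():
            paragraph.append(line.strip())
        else:
            flush()             # a blank line ends the paragraph
    flush()
    return root
```

The heading level decides nesting, so a `##` heading becomes a child of the nearest preceding `#` heading, and each paragraph lands in the note of the row it follows.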

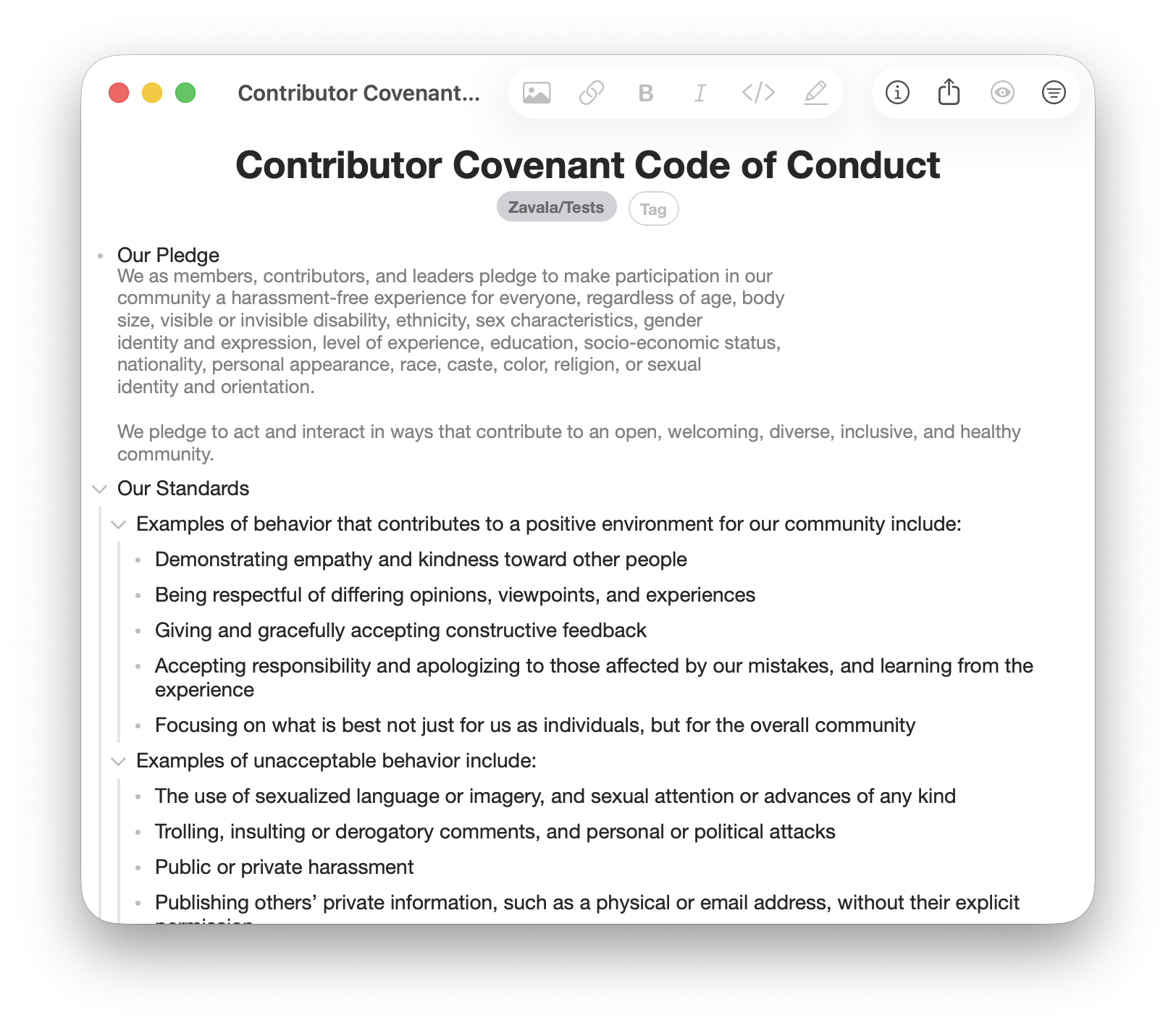

Here I’ve imported a standard CODE_OF_CONDUCT.md file. It can now be edited and then exported again using Export Markdown Doc, and it will be structurally the same as when it was imported (plus edits).

New Markdown Formatting Options

We’ve got a couple new formatting options for text. One is Code and the other is Highlight. More formatting options have been a popular request for Zavala. These are probably the final two that will get added, mostly because they don’t conflict with how Zavala works.

Zavala tries to stay as compatible with Markdown as possible. This means that underlining is not going to happen. Underlining in Markdown is reserved for links and there is no widely agreed upon way to extend Markdown to include underlines anyway.

The other main formatting choice left is strikethrough. Strikethrough is a softer no than underlining, but is still a no. It conflicts with how Zavala shows that a row has been completed. I really like using the strikethrough to show completed rows and have no plans on using checkboxes for that.

Shortcuts Menu

Another new feature coming in Zavala 4.1 is more of a power user feature. That’s why you have to enable it in the Settings to make it work. It is a menu that you can use to launch frequently used Shortcuts.

Zavala’s Shortcuts support is extensive and quite powerful. I use it to publish Zavala’s help web pages and my blog posts (including this one). One person uses a Shortcut to create a daily template to track their migraine headaches. You can use Shortcuts for lots of things.

I don’t like having to launch the Shortcuts app and dig around to find the Shortcut I want to run. Now you can just add the name of the Shortcut you want to run to Zavala’s Shortcut Menu. When you activate the Shortcut from Zavala, Zavala will launch the Shortcuts application, run the Shortcut, and then return to Zavala when you are done. Much nicer.

Big Release

In addition to those 3 changes, we now export images automatically into a folder next to your exported Markdown or OPML files if you are exporting an outline with images. Lots of bug fixes went into this release too.

Now… on to working on Zavala 4.2.

Zavala & AI

Notemap

Notemap is a newer mind mapping application that I was recently made aware of. It looks pretty cool. Mind mapping tools always struck me as very close to an outliner in functionality. They both use hierarchical relationships (and links) to relate information with each other. Outliners are more text based and mind mappers are more graphical.

What really fascinated me was how hard the maker of Notemap had leaned into integrating AI into the application. You can create new mind maps or prompt it to add more nodes. Kind of cool, especially when you realize you can use the AI to give you a starting point for a mind map. You can then prune ideas you don’t like and add additional ones to it.

AI as a Partner

I think a lot of ink has been spilled about AI inaccuracies and hallucinations. I would never recommend using today’s AIs to generate work wholesale in a way that isn’t thoroughly reviewed.

I recently began using Claude to code and it was a revelation. I work alone and don’t have a team of developers to bounce ideas off of. Claude became that for me. It helps me think about a problem from an angle I might not have before. I think AIs make good sounding boards.

I do not trust them any more than I trust most people. I always review the code written by Claude and either approve it or rewrite it (or make Claude rewrite it). I think this works pretty well and I can see how using an AI for writing outlines would be useful too.

Zavala and AI

I’m very hesitant to add trendy or shiny features to Zavala just to have them. The user interface can get cluttered and sometimes features don’t age well. We currently use AIs through a chat interface. That may not be the future of AI; it hasn’t been decided yet. Besides, I don’t want to overcomplicate Zavala by making users do things like decide which AI is best or have to enter API keys to access them.

For now, I’m going to sit back and let this AI revolution play out some more before adjusting Zavala to the new state of things. But I do see how AI could be useful today to some users. For example, to get them started on an outline if they are having trouble getting going.

Shortcuts to the Rescue

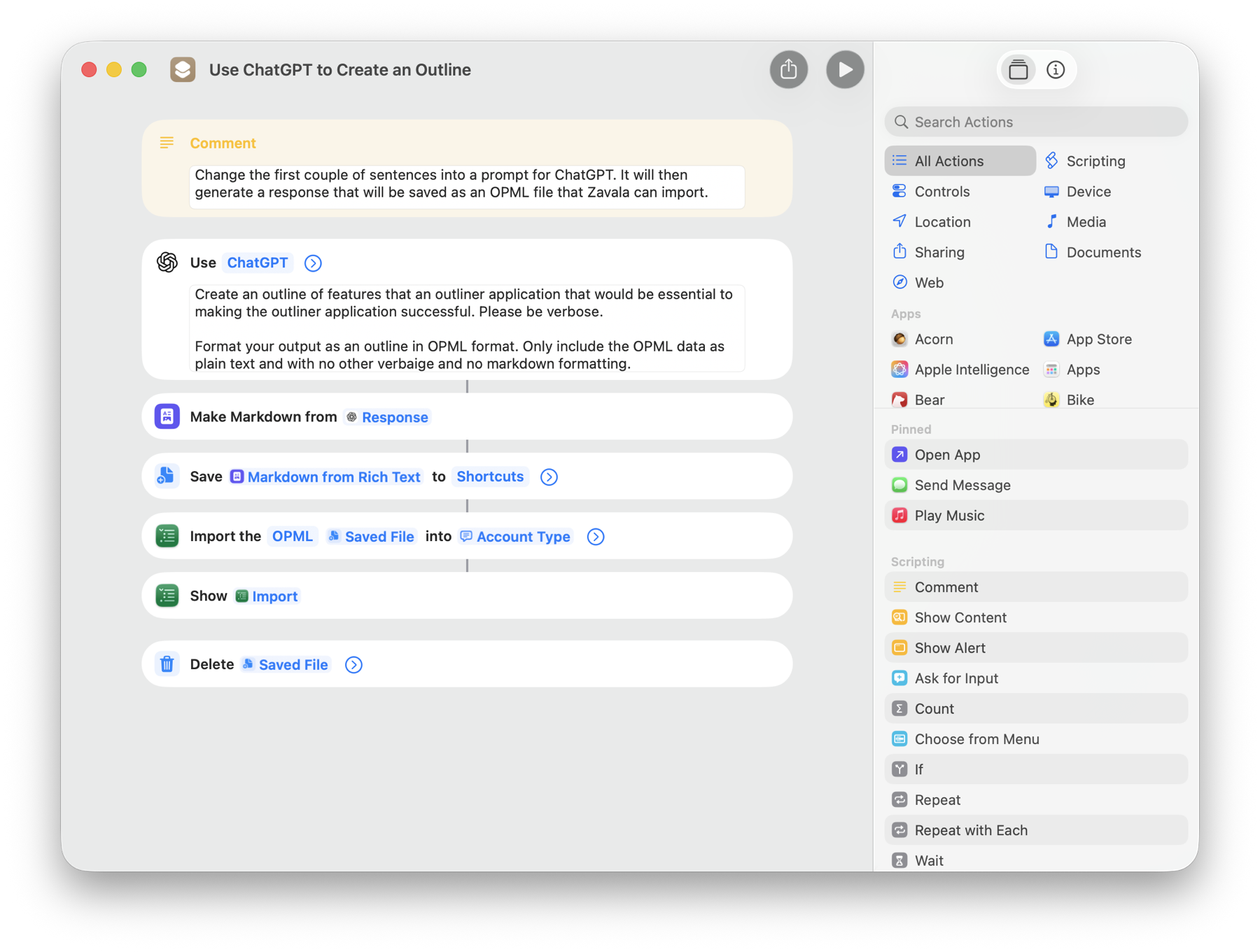

Zavala has great Shortcuts support and has for some time. With macOS 26 and iOS 26, Apple added support for utilizing AI models in Shortcuts. You can use these two things together to create outlines using AI. I wrote an example Shortcut, Use ChatGPT to Create an Outline, that does what it says. It even works in older versions of Zavala; you just need the most recent Apple operating systems.

Just modify the first sentence in the ChatGPT box to a prompt you come up with and run it! You’ll get a ChatGPT generated outline in Zavala, ready to edit.

Bespoke Personal Software

Anthology

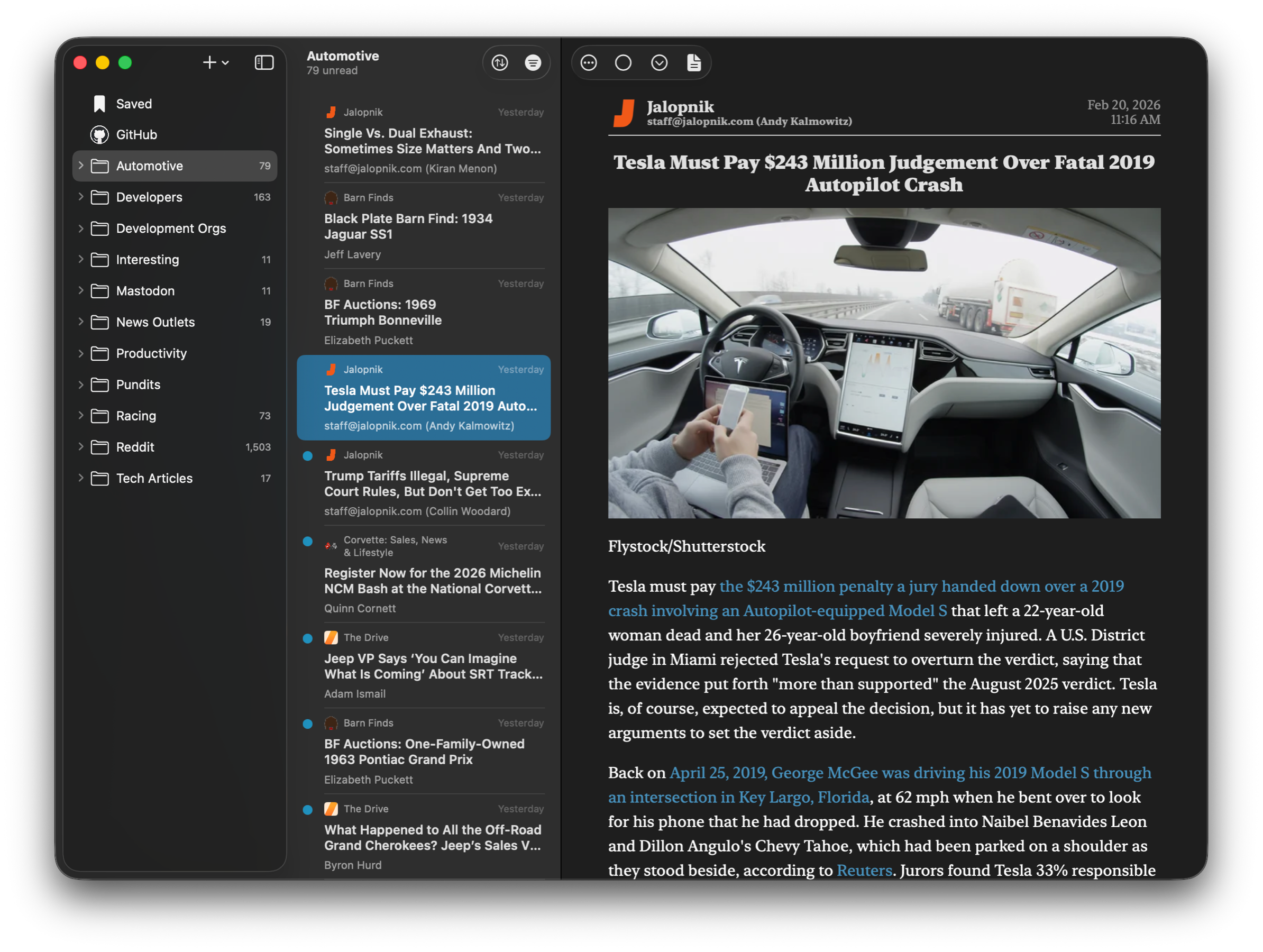

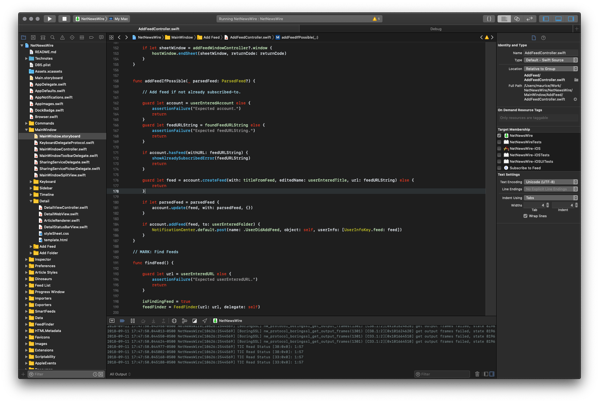

So… I wrote an RSS Reader. Why not? Everyone else seems to be doing it lately. I actually did it for the same reasons that I see others wrote theirs. There wasn’t an RSS Reader out there that fit me perfectly. In my case I wanted something like Tapestry with its plugin connectors. What I didn’t want was the almost infinite timeline. I wanted a traditional 3 column RSS Reader like the OG of the group, NetNewsWire. So I wrote Anthology.

You Can’t Have It

I think Anthology is pretty cool. It is a SwiftUI and SwiftData app that uses almost no AppKit or UIKit code. It runs great on macOS and iOS. The user interface is very similar to NetNewsWire but it uses Tapestry’s Open Source plugin connectors to provide access to RSS feeds, Mastodon, Reddit, and more. It has iCloud syncing.

I think it is a pretty strong contender or at least has a lot of potential. I have no plans on distributing it though. Not for sale and not Open Source. Frankly, I don’t want to deal with the hassle. Besides, the field is saturated. The last thing this world needs is another RSS Reader.

Built in Four Weeks

Nobody was more shocked than me about how fast Anthology came together. It started out as a proof of concept. I put together the basic UI to learn modern SwiftUI and SwiftData. I also wanted to see if the new WebPage API was up to the task. It took 4 or 5 days (I can’t remember) to get the basic UI together. Then I took a break from it to put Zavala 4.0 out.

Once Zavala was in Beta testing, I picked the project up again. I began using Claude Code to assist in getting it done. Once Apple integrated agentic programming into Xcode, I switched to that using Claude Agent. Man, what a force multiplier AI programming is. I hadn’t really given it much credence before and I was really shocked at how productive it made me.

I fleshed out the rest of the UI and persistence in no time. I didn’t vibe code it, but I sure used a lot of prompts. I quickly learned not to trust Claude to not litter my codebase with junk. I seem to have to remind Claude to clean up failed rabbit holes it likes to go down sometimes. I deleted Claude’s code and rewrote it. Sometimes I made Claude rewrite it. I did this for a couple of weeks. It felt a lot like working with a team of decent programmers who were good at taking direction.

Claude seemed to work best if it had an example to refer to. I used SwiftData CloudKit integration for syncing. It surprised me how well it worked, to be honest. It still wasn’t good enough. It created duplicate articles and constantly got confused about whether an article had been read or not. So I wrote a custom CloudKit integration for one entity and had Claude repeat the process for the rest. All in all iCloud syncing took about 5 days to get to 100%.

In the end, I would guess that Claude wrote about 80% of the code under my supervision. It’s not sloppy code either. I have no problem reading it. In fact it is better than a lot of human written code that I’ve reviewed and that used to be my job.

Is this the Future?

Will more people be writing complex pieces of software for just their personal use? Has AI lowered the effort and skill needed to create something really cool that much? I’m beginning to think so. Frankly, I had fun building Anthology. I enjoyed working with the AI. I could see myself doing something like this again. I think this is just the beginning of bespoke, personal software.

Building Zavala 4.0

I’ve been working on Zavala 4.0 off and on for the last 6 months. It isn’t a huge release or change in Zavala’s features. I think that is a good thing. I think Zavala is fairly mature as an outliner application. While there are things I would like to add in the future, there aren’t any really big outliner features that I think are currently missing and need to be added ASAP.

That said, there were some significant cosmetic changes and some good-sized changes under the hood in this release. So much so that Zavala requires the 26 versions of the operating systems to work. Zavala 4.0 is also not backwards compatible with previous versions of Zavala, especially when it comes to iCloud syncing. Read on to learn why that is.

Fixing iCloud Syncing

Adding data syncing to any application is hard. iCloud helps with that somewhat, but it is still really hard to get right. Shoehorning a hierarchical data structure like an outline into a flat data structure like iCloud is super hard and I did not get it right the first time. While I did improve the reliability of the syncing code over the course of many Zavala releases, it was never quite right.

Corrupted Outlines and occasional data loss were the unfortunate result of not having syncing code 100% perfect. When dealing with synced data anything less than 100% perfect is unacceptable. This has been resolved in Zavala 4.0.

To do this I had to change how Rows are stored in the internal database as well as how they are stored in the iCloud database. This new solution works really well, but isn’t compatible with the old versions of Zavala. This is because the older versions of Zavala have no idea about the database changes and would corrupt the values if allowed to.
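To illustrate why fitting a hierarchy into flat records is fiddly, here is a small Python sketch of one common approach: each record stores its parent's id and its position among its siblings. This is an assumption for illustration only, not Zavala's actual storage or iCloud schema; `Node`, `Record`, `flatten`, and `rebuild` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """In-memory outline row with nested children."""
    id: str
    text: str
    children: list["Node"] = field(default_factory=list)


@dataclass
class Record:
    """Flat record: no nesting, only a parent pointer and a sibling position."""
    id: str
    parent_id: Optional[str]
    order: int
    text: str


def flatten(nodes, parent_id=None):
    """Walk the tree depth-first and emit one flat record per row."""
    records = []
    for order, node in enumerate(nodes):
        records.append(Record(node.id, parent_id, order, node.text))
        records.extend(flatten(node.children, node.id))
    return records


def rebuild(records):
    """Reassemble the tree from records that may arrive in any order."""
    nodes = {r.id: Node(r.id, r.text) for r in records}
    roots = []
    # Sorting by position keeps siblings in their original order.
    for r in sorted(records, key=lambda r: r.order):
        siblings = roots if r.parent_id is None else nodes[r.parent_id].children
        siblings.append(nodes[r.id])
    return roots
```

Even in this toy version the tree is only correct if every record's `parent_id` and `order` agree with its neighbors. A client that writes some of those fields without understanding the scheme can leave rows orphaned or out of order, which is the kind of corruption a format change has to guard against.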

If I could have made this backwards compatible with older versions of Zavala, I would have. The best I could do is not allow Zavala 3.3.9 and 3.3.10 to sync with iCloud if a version of Zavala 4.0 had touched it. I did the best I could to prevent any data loss. Contact me using the Email Feedback option if you have problems. I will do everything I can to help you out if anything goes wrong.

Supporting Liquid Glass

Love it or hate it, Liquid Glass is the new design language from Apple for their latest operating systems. If you are an app developer and don’t support it, your app is going to look dated and out of place on the latest OSes. Fortunately, I feel like Zavala is one of those kinds of apps where Liquid Glass looks good and isn’t the worst at usability.

Unfortunately, it is very difficult to have a Liquid Glass version of your interface alongside the legacy look and feel of previous OS versions. This is because you have to update to the latest APIs to correctly use Liquid Glass and some things, like the spacing of elements, have been changed. Basically, to keep backwards compatibility for OSes before the version 26 ones, you need to maintain two different versions of the user interface code.

So I made the difficult decision to drop support for previous versions of iOS, iPadOS, and macOS. This is particularly painful due to the fact that Zavala 4.0 can’t sync with Zavala 3.x or earlier. Basically you need to have all your devices up to date to use Zavala 4.0. My apologies.

German Localization

Outside English speaking countries, Germany has the most downloads of Zavala. This makes it the most likely candidate for localization.

The ability to do localization was added in Zavala 3.x by Stuart Breckenridge. Many thanks to him. Unfortunately, neither I nor Stuart are German speakers. I put out some feelers for someone to translate Zavala into German, but didn’t have any luck finding someone. So I turned to AI.

I used Claude Code to translate Zavala into German. I checked as much of the translations as I could and they look pretty good to me. Of course there may be mistakes in there that need to be corrected. I think the same would probably be true if I used a human for the translations.

Simplified Chinese Localization

We got a last minute contribution from SteveShi. He used some AI and then verified and corrected it himself. Many thanks to SteveShi for this contribution!

New Create Rows Setting

One way that various outliners differ is how they handle this scenario. You are at the end of a Topic line and hit return to create a new Row, but you have child Rows under the current one. Some outliners create the Row right after the current Row as a new child. This is how Zavala has traditionally done it. Some outliners create the Row at the same level as the current one, after the child Rows.

On the site Outliner Software I saw the user Satis mention that he found Zavala’s default behavior regarding creating new rows with child rows confounding. After some discussion I saw things his way. I hesitate to add Settings to Zavala, but I think this one rises to the occasion. You can now specify which behavior Zavala uses with the new Create Rows editor setting.
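The two behaviors are easy to mix up in prose, so here is a minimal Python sketch of the difference. It is not Zavala's code; `Row`, `create_row`, and the `as_first_child` flag are hypothetical stand-ins for the Create Rows setting, and the fallback for rows without children is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Row:
    """Hypothetical outline row with a topic and child rows."""
    topic: str
    children: list["Row"] = field(default_factory=list)


def create_row(siblings, index, topic, as_first_child):
    """Insert a new row after Return is pressed at the end of siblings[index].

    as_first_child=True  -> the new row appears right after the current row,
                            as its new first child.
    as_first_child=False -> the new row is a sibling of the current row, so it
                            appears after the current row's children.
    """
    current = siblings[index]
    new_row = Row(topic)
    if as_first_child and current.children:
        current.children.insert(0, new_row)
    else:
        # Assumption: with no children, both behaviors create a sibling.
        siblings.insert(index + 1, new_row)
    return new_row
```

With a "Groceries" row that has children "Milk" and "Eggs", the first behavior puts the new row above "Milk", while the second puts it below "Eggs" at the same level as "Groceries".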

Zavala 4.0 is out now

Go and check it out. I’m pretty happy with this release and I hope you will be too.

Zavala 4.0 Beta Testing

Zavala 4.0 is now in Beta Testing. You can find out more about it in this post.

Zavala Will Always Be Free

My promise to you.

I have every intention of maintaining and updating Zavala for as long as I am able. I’m also committed to keeping it free. I have no intention of getting you hooked on using it and then starting to charge a subscription.

To show I am serious about this, Zavala is Open Source software released under the MIT license. This means that any other developer can take the years of work that I have in Zavala and make a competing outliner from it should I start charging for it. Given how small and competitive the outliner market is, I don’t stand much of a chance of making any money by going commercial. After all, I could be competing with my own past work.

What if I get run over by a bus?

Since Zavala is Open Source someone could pick up the project and continue to update it. Worst case scenario, some enterprising independent developer could try to make a commercial product out of it. I don’t see much money in the endeavor, but others may see it differently.

Why don’t I charge for Zavala or accept donations?

Funny story. I fully intended to when I started writing it. After doing some competitive analysis on the Mac-only outliner market, I realized there wasn’t much money there. There was so little, in fact, that it isn’t enough to motivate me to do the business side when I’d rather be coding.

Let me break it down. Up front payments are a dead-end these days. I would have to add a free tier, in-app purchases, and maybe a subscription option to the app. That means more coding. Then I need to incorporate a business of some kind and do all the regular bookkeeping associated with it. That would be payroll taxes, quarterly and annual tax filings, etc… I used to own my own software consulting business and really don’t want to do that stuff again.

But if I thought I could make it up on volume, that might make it worthwhile, right? The simple truth is most computer users don’t know what an outliner is, much less how useful they are. Even those that do rarely need to use one on a daily basis. Zavala is free and has been all the years that it has been available in the App Store and I couldn’t make it on the number of users I have now. That number would probably drop to about zero if I were to start charging. Could I get more volume by marketing Zavala? Sure, but that is another business thing that costs time and money and that I don’t want to do.

There is an upside to not having money involved when you write software. I don’t have to add features just to drive an upgrade cycle. With commercial software, you constantly have to deliver upgrades to keep a steady income regardless of if you are subscription based or charging up front. I don’t want Zavala to become bloatware. I don’t want to add features that I don’t believe add core value, just to keep the money coming in.

Same goes for donations. I don’t accept donations because I don’t want to feel obligated to implement a feature that a donor may want, but that I don’t think belongs in Zavala. I would rather accept feature requests on an equal basis from all users and decide which to implement on the merit of the idea, rather than who gave me money.

Why write Zavala at all?

I retired early after a successful career as a software consultant. I really liked writing software, I just didn’t always like the work I had to do. Now I have the freedom to craft software how I see fit and only work on projects that I am interested in.

The way I usually explain it is like this. Imagine you made furniture your whole life, but your employer only gave you pallet wood to use and half the time needed to make a piece. You were good at it and loved furniture, but were unfulfilled at your job until you retired. Now you can make furniture using walnut and take the time needed to make something you are proud of.

How can you help, you ask?

Please, please email me with bug reports using the Provide Feedback option under Help (in Settings on iOS). I take them seriously and fix them as fast as I can. I do test Zavala as rigorously as I can. Unfortunately it is the nature of software that a developer will never be able to predict every way that users will use an app. Production bugs do happen. The best we can do is squash them as fast as possible.

Zavala 3.0

It’s Live!

Zavala 3.0 is now live in the App Stores. There are only a couple of new major features in Zavala 3.0, but I put a lot of work under the hood for this release. Lots of code was changed to modernize the code base and support new operating system features. For example, Zavala will support the Apple Intelligence Writing Tools when they become available in macOS 15.1 and iOS/iPadOS 18.1 (assuming you have a supported device).

The biggest change was adopting Swift Structured Concurrency. This should not be noticeable to the average user except that there should be fewer unexplained crashes happening now. The crashes themselves are rare, but I want using Zavala to be as good as it can be.

The new Group and Sort commands are nice-to-have new features. As usual there were a handful of bug fixes too.

What’s next?

I’m honestly not sure what, if anything, I should enhance next. I started out to build a good, simple outliner and I think that is what Zavala is now. One of the reasons that I wanted Zavala to be free and open source is so that I didn’t have to have a constant upgrade cycle to drive revenue. Basically, I didn’t want to add features I didn’t believe in just to make a living. That could lead to Zavala suffering from feature bloat. I’ve seen enough applications fall into that trap.

This doesn’t mean that Zavala is abandoned or not being maintained. I still plan on fixing bugs and taking feature requests seriously. Stuff that fits well in Zavala and doesn’t overcomplicate it will be added in minor releases, the same way that it was in the 2.x series. Apple evolves their platforms at an astonishing rate and developers have to modify their apps to keep up with it. I’ll stay on top of things, like Apple Intelligence, so that Zavala doesn’t get left behind and look or feel dated.

As always, if you find a bug or have an enhancement request, drop me an email. Heck, email me just to say, Hi. Since I don’t collect any user data, this is the only way I know that anyone is even using Zavala.

I updated a couple of my minor apps today so that they look correct on the latest macOS releases. The first is Feed Compass, which is an app to help you find, preview, and subscribe to blogs. I also updated Feed Curator, which is an OPML feed list editor. I also added multiple select for a Reddit user who was needing it. It felt good to help someone out and to finally update the appearance of these apps.

Drag Boat Race in Parker, AZ

About a month ago Nic and I went to the drag boat races in Parker, AZ. The start of them is right outside the bar at Pirates Den. It was pretty fun. We got to drink beer all day and watch the races, then crawl to the van at the end of the night to sleep it off. Mike Finnegan from Roadkill was there to race his new boat. That was pretty great for me. I’m a huge Roadkill fan. Finnegan basically made a movie about it and put it on YouTube if you are curious as to what the experience there was like.

If someone has to tell you how good they are at something, their work doesn’t stand on its own. If someone won’t explain why they made a decision, it is because they know that it was a bad one. If someone tells you that you aren’t worth listening to, it’s because they are afraid of what you will say.

The main project I work on, @NetNewsWire got a shout out in the Atlantic today. How to Take Back Control of What You Read on the Internet

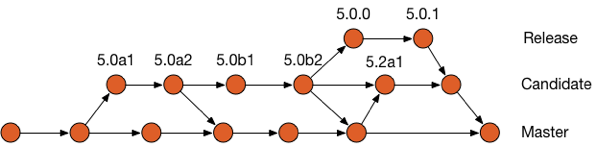

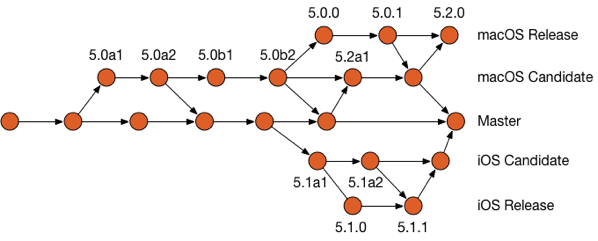

I messed up a @NetNewsWire merge recently. I left some Git merge conflict markers in a couple files. @danielpunkass suggested that a pre-commit Git Hook could prevent this in the future. So I made one. Daniel has added this to his global Git templates. You should too.
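For anyone who wants to see the general idea, here is a minimal sketch of such a check in Python. It is not the hook linked above; the function names are hypothetical and the marker pattern is an assumption about what is worth flagging. It relies only on standard Git commands: `git diff --cached --name-only` to list staged files and `git show :path` to read the staged contents.

```python
#!/usr/bin/env python3
import re
import subprocess
import sys

# Start and end markers carry a label after a space; the separator stands alone.
MARKER = re.compile(r"^(<{7}|>{7}) |^={7}$")


def find_conflict_markers(text):
    """Return the 1-based line numbers that look like Git conflict markers."""
    return [n for n, line in enumerate(text.splitlines(), 1) if MARKER.match(line)]


def staged_files():
    """List files that are added, copied, or modified in the index."""
    out = subprocess.run(
        ["git", "diff", "--cached", "--name-only", "--diff-filter=ACM", "-z"],
        check=True, capture_output=True, text=True,
    ).stdout
    return [path for path in out.split("\0") if path]


def main():
    """Print every marker found in staged content; return 1 to block the commit."""
    failed = False
    for path in staged_files():
        # ":path" names the staged version, not the working copy.
        blob = subprocess.run(
            ["git", "show", f":{path}"], check=True, capture_output=True
        ).stdout
        text = blob.decode("utf-8", errors="replace")
        for line_number in find_conflict_markers(text):
            print(f"{path}:{line_number}: merge conflict marker", file=sys.stderr)
            failed = True
    return 1 if failed else 0

# A real hook file (.git/hooks/pre-commit, marked executable) would end with:
#     sys.exit(main())
```

A bare line of seven equals signs can also be legitimate, for example a short Markdown setext heading underline, so a stricter version might only report the separator when a start marker appears in the same file.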

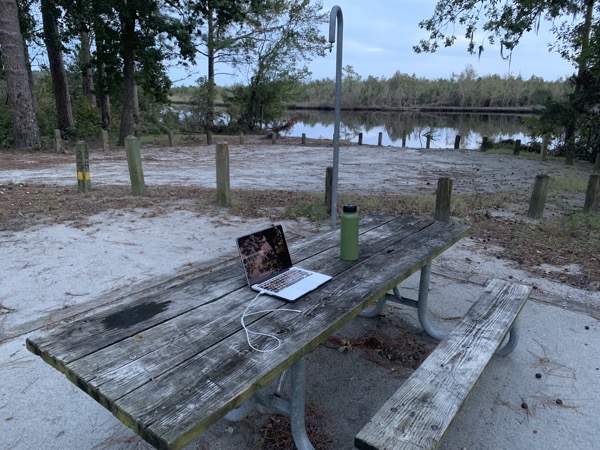

Just another day at the office.

I got new wheels and tires on the van. They look great, but the larger size puts more strain on my little 4.6 V8. I completely blew a spark plug out of the engine from pushing it too hard coming up a mountain pass. Life on the road…

Christmas dinner this year is Ramen on Sunset Blvd.

I really like Dan Rather’s Substack newsletter, Steady. It has some great social commentary on it. Dan Rather is a journalist from a time when news wasn’t entertainment. steady.substack.com

It would be great if Substack had RSS feeds for its Inbox and Discover timelines. I understand why they don’t. They really need to get users into paid subscriptions to keep the site going. I guess we should be glad that they at least have RSS feeds for individual newsletters.

Yeah. It’s one of those days.

I’d love to have Universal Links on the Micro.blog Apple apps. Since they are Open Source, I’d implement them myself. I can’t see how it can be done. @manton rightly encourages everyone to have a domain. That makes it impossible to do consistently.

Ventura’s System Settings

I have to admit, when I saw screenshots of Ventura’s new System Settings stuff, I was very unimpressed. It looked too much like you would see on Windows or Linux for me.

Now that I’ve actually used it, I appreciate the design decision that was made. I know a lot of people were critical, myself included, about basing the design of the System Settings on the iOS ones. But making it like the iOS ones reduces cognitive load when switching back and forth a lot more than I thought it would. I can find the setting that I want much easier now.

There is a lot to criticize though. It feels clunky, like it is missing some animations. For example, if you have the right pointing chevron, it should animate you in that direction. I know that style of navigation sounds very iOS like, but the column view in Finder works that way too.

It also doesn’t feel like it fits in quite right with the rest of macOS. I haven’t read Apple’s HIG yet this year so that could be it. My guess is that we will see the rest of macOS move more in this direction next year.

Because so many more people own an iPhone than a Mac, making Macs easier for iPhone owners to use is a good strategy. Lots of the same people who are critical of recent macOS changes are also the same ones who are frustrated that Apple isn’t doing enough to grow the Mac user base. It looks like Apple is working to leverage the popularity of the iPhone and the iPad to win over users. Between that and the new Mac hardware, it looks to me like they are very focused on the success of the Mac.

I wrote a blog post about how I blog to Micro.blog using Zavala and Humboldt. Blogging this way probably isn’t for you unless you are big into outliners. Still it is neat to see how these two computer systems that weren’t designed to work together could be made to.

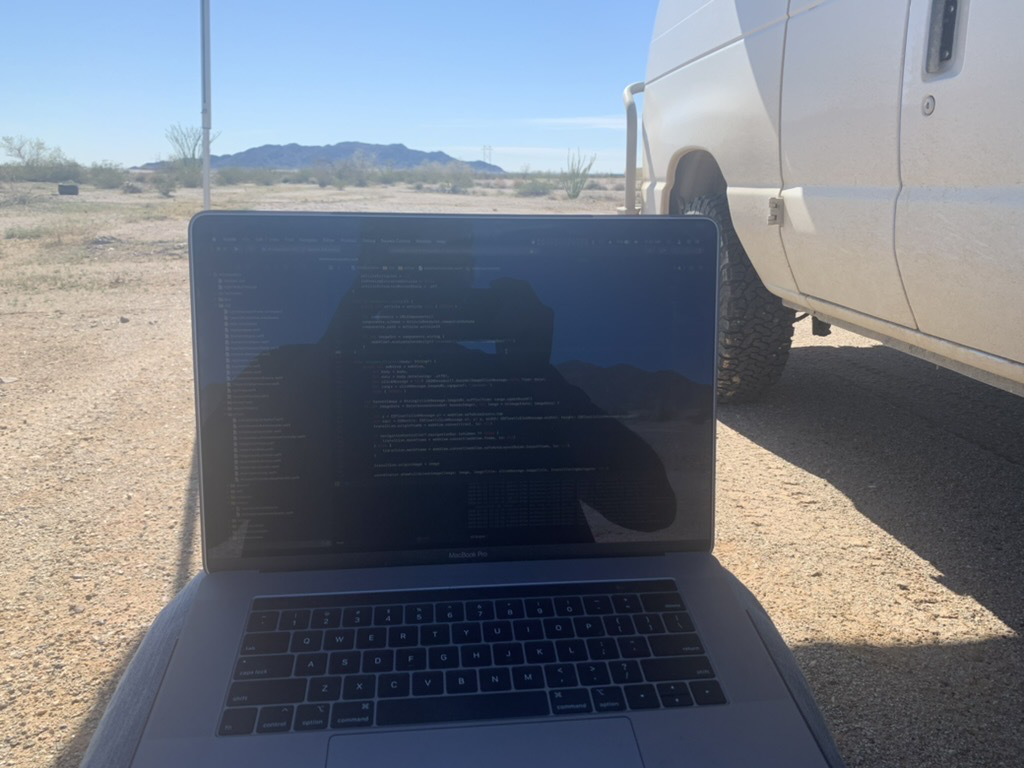

Software Development And Vanlife

Part of the year I live out of my self-converted E-250 Campervan. My wife and our 2 cats are also traveling with me. That doesn’t leave a lot of space for an office to do software development. This is how I do it.

My Portable Office

Wouldn’t it be great if you had a full office with full-size keyboard, monitor, printer, and the works while living in a van? No chance.

Desk

I wish I had a desk. My lap is my desk, and sometimes a picnic table. The picnic table is a rare treat since we mostly do what is called boondocking. That is where you don’t have any hookups for electric or water. Boondocking is typically done on public lands and is free.

Sometimes I sit in the passenger seat of the van. Right now, I’m sitting in the back of the van on my couch which converts into my bed at night. My favorite is sitting in a chair outside as long as the glare isn’t too bad.

Computer

I have one of the new 16” M1 MacBook Pros. It compiles code fast. Like, really fast. It makes working on big projects much less painful. The battery life is unbelievable. This is really important in a van when off the grid. Electricity is at a premium. I’m usually able to charge the laptop after the van’s batteries have been topped off and don’t need any more power. The MacBook will last with me using it as much as I want until the next day.

The speakers are also something that needs to be heard to be believed. We stayed at a friend’s condo in Redondo Beach for 3 weeks over the holidays. The whole time we used my computer as a television and the incredible sound capabilities made this much nicer.

Purse

Man purse? Computer bag? I’m not sure what to call it. It was a purse that I bought at REI.

I don’t have a conventional computer bag. A conventional computer bag is too bulky to fit in my limited van space. I have a sleeve for the MacBook and this little bag to hold my cables, headphones, computer glasses, and charging brick.

It works out pretty well. If I want to work in a coffee shop, I just throw the purse over my shoulder and carry the computer in its case. The rest of the time everything fits into my clothing drawer with my clothes.

Internet

I use cellular data for most everything I do. I have 1GB per month of data on a Verizon plan. I never come close to using all of it.

The real tricky part is getting data while away from civilization. Most of the time I can just tether my computer to my phone and it is fine. Sometimes if I’m just out of reach of good cellular service I use my cellular booster in the van. I have a Weboost Drive 4g-X with a marine antenna. This will boost a weak, unusable signal into one that is very serviceable.

Recent Project Developments

I’m always working on something.

NetNewsWire 6.1

We’ve released development versions of NetNewsWire 6.1 for both iOS and the Mac. The blog post for the 6.1b2 version for the Mac has good info in it. The tentpole feature for this release is Themes.

Themes give you the ability to change how the Article is rendered in NetNewsWire. This can be a matter of personal taste and sometimes a matter of accessibility. For example, if a font is pretty but difficult to read, you can change that font to one that is easier to read. You can also change Article colors, sizes, and key field placements. All this is pretty easy to do if you have basic Web development skills.

NetNewsWire 6.2

The NetNewsWire team will occasionally work on multiple releases at the same time. This time we are testing version 6.1 while developing version 6.2. For version 6.2 we plan to finally add FeedWrangler syncing for macOS and iOS.

On iOS, we’re also updating the user interface to look more at home on recent versions of iOS. My NetNewsWire teammate, Stuart, and I have been hard at work on the iOS UI refresh. I’m pretty happy with the progress thus far. Stuart has also contributed a Notification manager that will make working with Notifications much easier.

Zavala 2.0 Release

I managed the second major release of Zavala while working out of the van in the desert close to Holtzville, CA. This release is a big deal to me. Thanks to Brad Ellis and some elbow grease from me, this release really feels professional. I mean, it feels like there is a real budget with several team members from a good company kind of professional.

The big deal with this release is Shortcuts support. This really makes Zavala much more customizable and powerful. I hope the users end up finding this useful. I use it all the time, from backing up my outline database, to posting to different blogging systems.

Vanlife is Often Boring

What you don’t often get from Instagram #vanlife stuff is that there are lots of times that you have nothing to do. If you are off the grid for 4 or more days at a time with nothing but scrub brush and sand, what do you do? I’m fortunate enough that my hobby, software development, is still possible even in those conditions.

Zavala is a modern outliner for Mac, iPhone, and iPad. Version 2.0 has just been released with a refined user interface and support for Shortcuts. Read all about the 2.0 release.

This is awesome: blog.iconfactory.com/2021/12/n…

Radford Racing School

Cars

I’ve always loved fast cars. Most of my adult life has been focused on being the best software developer that I can be, so my other hobbies, like cars, suffered. Now that I am retired, I did some life reassessing. One of the things that I feel like I missed out on was enjoying cars more. I decided that I wanted to do more than just zip around on the street. I wanted to race cars on a track.

I don’t have any professional aspirations to become a race car driver. I just want to do my best to win and enjoy the process. I decided that I would race the car I have rather than buy or build another one. I have a 1971 Corvette that has had the suspension, brakes, engine, and transmission all updated. There is a little more work that I need to do to it to get it ready for autocross or road racing, but I think it will be a good starter car.

Racing School

Since I know very little about road racing, I figured that I should go to a school to learn more. Radford Racing School came up immediately when I began searching for a racing school. I had a bit of good luck. They were having a Black Friday sale on classes and they were located in Arizona, which is where we were headed for the winter. I booked a 3 day high performance driving course almost immediately.

I probably wouldn’t have gone if not for the Black Friday sale, just due to the cost alone. After going to Radford, I get why it is so expensive. They have two full-size race tracks, a big autocross course and a couple skid pads. There is a large full-time staff of instructors, administrative people, and mechanics. Their fleet of cars is massive and requires constant maintenance. They go through gas and rubber like nobody’s business. I think the school is actually reasonably priced for what you get.

Classes

We had some classroom time where we were taught the fundamentals. Where to look and how weight transfer affects traction were some of the subjects covered. There was surprisingly little time spent behind a desk. Most of our time was behind the wheel.

One of the first and most important things you learn is accident avoidance. We talked about it in class and they ran us through a series of drills to make sure we could pull off the maneuvers. Some of the maneuvers were pretty hard. Braking at 65 MPH while swerving to miss an obstacle was quite challenging in the distance they gave you.

We did a lot of autocross to hone our skills before hitting the track. I didn’t think I would enjoy the autocross stuff as much as I did. Racing your car through cones just didn’t seem like a lot of fun, but I was wrong. It might be something that I try and get the Corvette out to do next summer.

We also got a lot of driving time on the full size race tracks. I had the time of my life. We also got some good one-on-one time with the instructors, who would both take you for ride-alongs and ride with you. There is nothing better for learning than to be in the same car with someone who really knows how to move that machine around.

Was it worth it?

Yes, in fact I probably will go again. They offer more advanced courses that go beyond what we did. I was learning new things right up till the very last minute and know that I have more to learn. First though, I’m going to take what I learned and refine it. Next summer I hope to get to do some road racing where I have a chance to develop myself and maybe then I can get more out of a follow up course.

Vanlife 2021

2020 Sucked (For Everyone)

We got back to Centerville, IA just as the world was entering lockdown in March of 2020. We were pretty strict with our protocols, so we didn’t leave the house much until vaccines became widely available. Needless to say, there wasn’t any van adventuring happening, but Nic got really good at making sourdough bread and I wrote an outliner application.

I’ve got no regrets about how we spent 2020 and most of 2021. It was hard, but my parents are getting up there in age and Nicole is an ex-smoker. Covid could have taken any one of us or left us with a permanent disability. Thus far it hasn’t and I will do what I can to keep it that way.

Covid and Vanlife

We spend a lot of time isolated and outside while living out of the van. I think most people focus on that and don’t realize how much time we spend in public places while traveling. The most common public place we go to is the gym. That is our main place to shower.

We decided that since we were vaccinated and boostered that it would be safe enough for us to get back to using the gym again. But just in case we got some variant that is vaccine resistant, I installed a shower on the van so that we could avoid the gym if we needed to.

It holds 7 gallons of water and is black so that the sun can heat it. You pressurize it using compressed air.

We used it for the first time yesterday. I used my onboard air compressor to give it pressure. Thankfully the shower has a pressure release valve. I over compressed it (after only a minute) and it shot water two feet above the top of the van as I frantically tried to shut it down. Afterwards Nicole successfully used it to wash her hair. The campground we are currently at in Kearney, AZ doesn’t have any showers and we are a long way from a gym, so the new shower immediately proved itself.

Vintage Campers

Backtracking a bit, we stopped at a really nice campsite that had restored, vintage campers and some cars in Albuquerque, NM on our way to Arizona. After getting settled there, we immediately grabbed a couple beers and toured it.

Radford Racing School

Our next stop is Chandler, AZ and the Radford Racing School. They have a 3 day performance driving course that Nicole and I are going to take. We are really looking forward to the adventure. I’m very much a car guy and Nic just likes to drive fast. We’ll be driving Challenger Redeyes that have almost 800 HP. Those cars have two different keys. You have to have a special one to unlock the full engine potential. I doubt we get that key right away, but hopefully by the end of the course we’ll get to open those Mopars up.

Feels Good

We’ve been out for about a week now and are finally getting back in the groove and it feels good. Everything is a lot more work, but the routine becomes the norm, and soon we notice it less and less. Eventually, it will seem like this is how we’ve always lived and we will have to work to remember what it is like to live in a normal home.

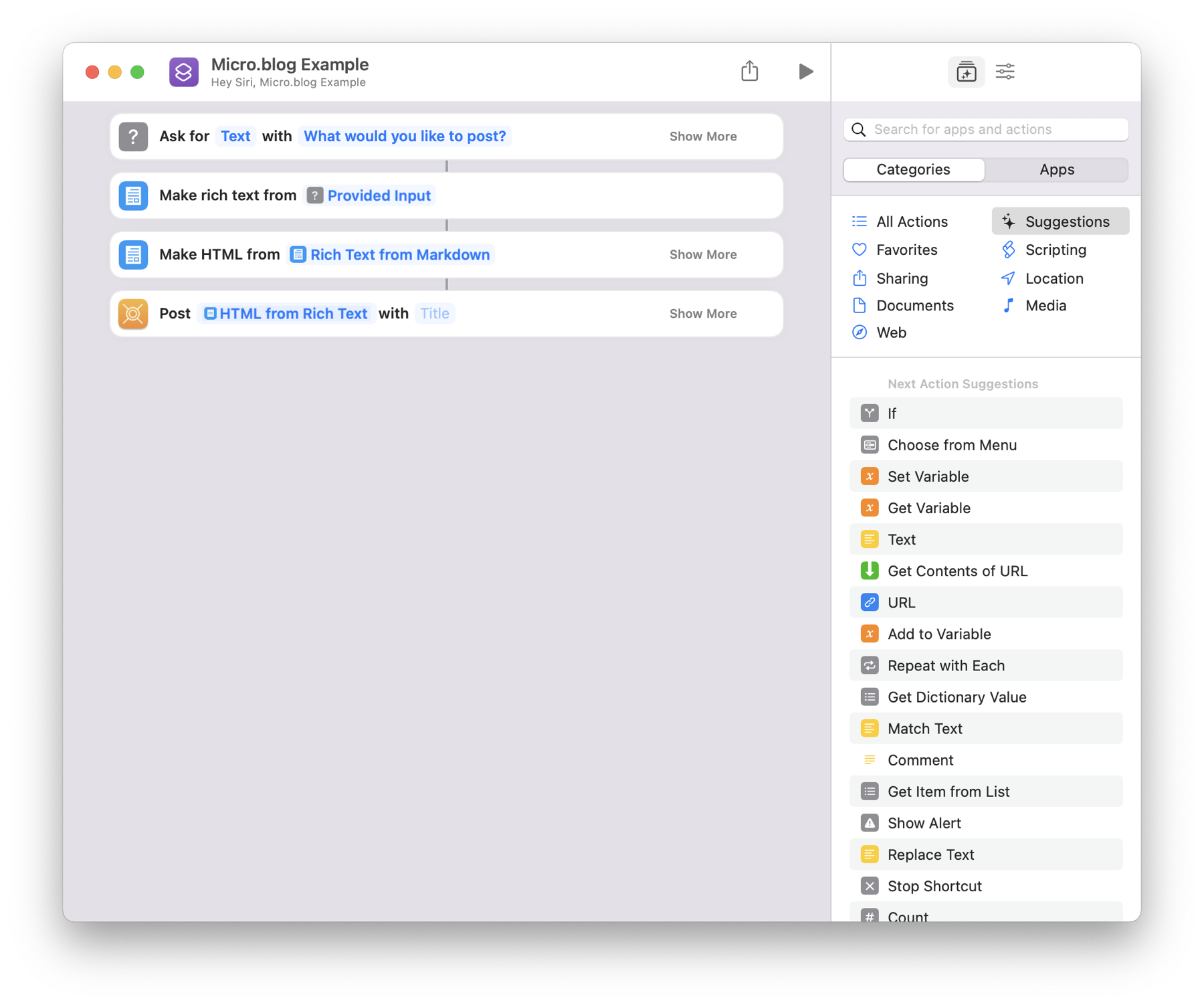

Shortcuts for Micro.blog

Announcing Humboldt

Humboldt is a new Open Source project I put together so that I could use Shortcuts to post to Micro.blog. Humboldt is built on top of Snippets from Micro.blog, which does the heavy lifting. Humboldt only exposes a small portion of Snippets, so there is room for Humboldt to grow in the future if there is demand for more functionality.

Humboldt is available for both macOS and iOS in the App Store.

The Main Humboldt App

The main app walks you through the usual Micro.blog sign-on flow. You just enter the email address you use for Micro.blog and wait for your sign-on email to arrive. When it does, you click a link in the email and you will be routed back to Humboldt, which completes the sign-on for you. That’s all the main app does. You are now ready to begin building Shortcuts that communicate with Micro.blog.

Post HTML to Micro.blog

The main Shortcut action to use is Post HTML. You can submit just a short HTML snippet or a full blog post. The Title is an optional parameter, since lots of Micro.blog posts are short and don’t require one.

Here is a simple Shortcut that just asks for what you want to post and then does it. You can use this Shortcut completely hands free to post to Micro.blog.

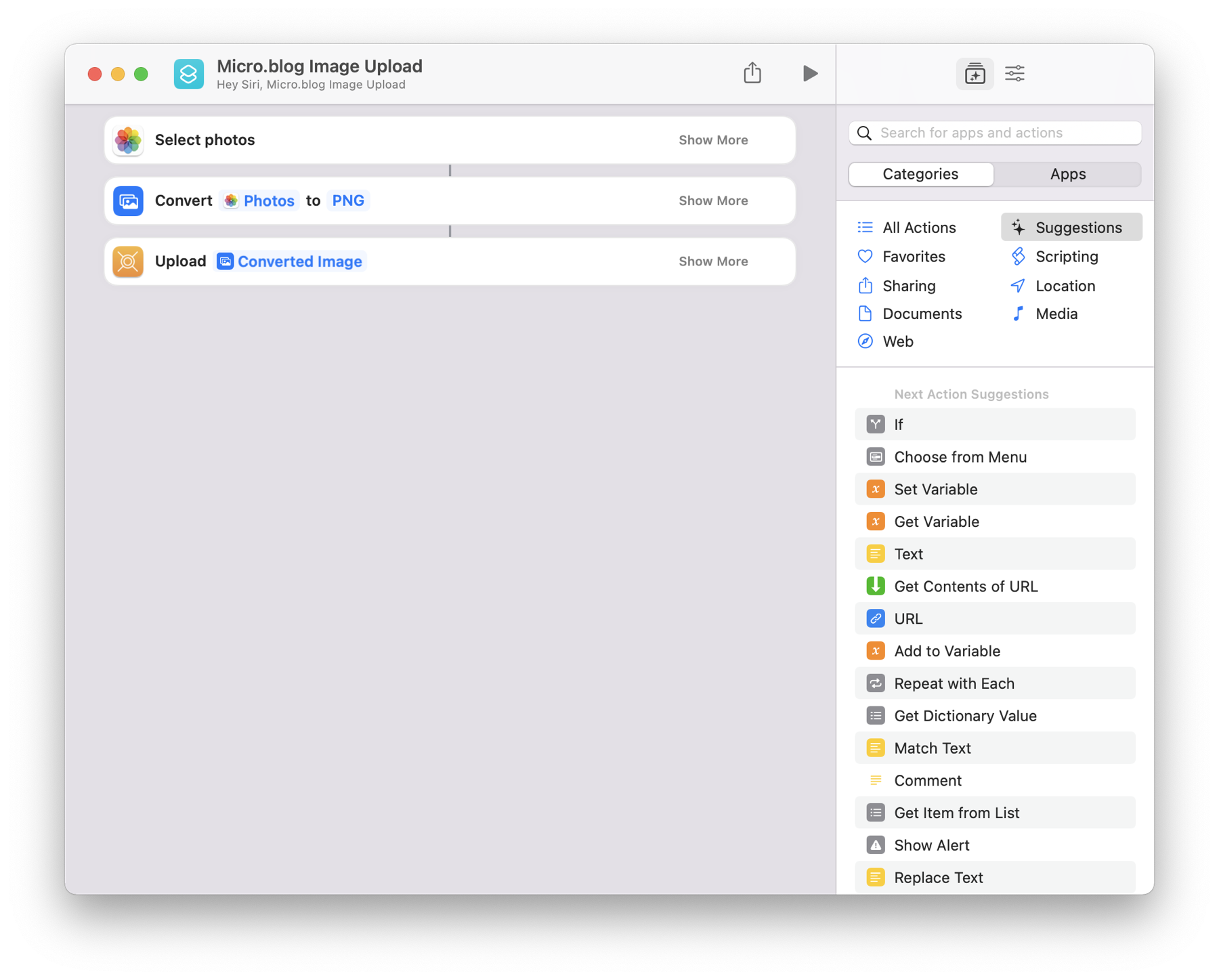

Upload Image to Micro.blog

You can also upload image resources to Micro.blog. This Shortcut example shows it in action.

Get Blog ID from Micro.blog

This is useful if you have multiple Micro.blog blogs associated with your account. You can pass the result of this action to either Post HTML or Upload Image to work with the blog you want. Otherwise, those actions work on the currently selected default blog. You can change the current default blog using Micro.blog on the web.

Putting Them Together

You can upload blog posts with images embedded in them. In fact, that is how this blog post was uploaded to Micro.blog!

The Upload Image action has an output parameter. It is the published URL of the image. You need to save this for each image you upload and pass it to a step that manipulates the HTML of the post you will upload.

Your HTML needs to have placeholder text, unique to each image, that can be searched for. Each placeholder needs to be replaced with its image’s published URL. You can use the built-in Replace Text Shortcut action to do this. Now you can use Post HTML to post your updated blog HTML.
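Humboldt itself is driven from Shortcuts, not code, but the substitution step is easy to picture in a few lines of Python. This is only a sketch of the idea. The `{{IMAGE_n}}` placeholder style, the `embed_image_urls` function, and the example URLs are all made up for illustration.

```python
def embed_image_urls(html: str, published_urls: dict[str, str]) -> str:
    """Replace each unique placeholder in the post HTML with its published image URL."""
    for placeholder, url in published_urls.items():
        # Fail loudly if a placeholder is missing, so a post never goes up with a broken image.
        if placeholder not in html:
            raise ValueError(f"placeholder {placeholder!r} not found in post HTML")
        html = html.replace(placeholder, url)
    return html


# Hypothetical post body. The placeholder naming scheme is an assumption.
post_html = '<p>Our van:</p><img src="{{IMAGE_1}}"><p>The shower:</p><img src="{{IMAGE_2}}">'

# Hypothetical URLs standing in for what each upload step would return.
published = {
    "{{IMAGE_1}}": "https://example.com/uploads/van.png",
    "{{IMAGE_2}}": "https://example.com/uploads/shower.png",
}

final_html = embed_image_urls(post_html, published)
```

In a Shortcut, each `replace` call corresponds to one Replace Text action, and `final_html` is what gets handed to Post HTML.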

Zavala Integration

I wanted the ability to build a blog post in Zavala and then post it to Micro.blog. This is the original reason why I built Humboldt. Shortcut support for Zavala is “coming soon”, which is needed to tie everything together. When it is released to testing, I will post more about how to make Humboldt work with Zavala.

Future Updates

For now, Humboldt does everything I need it to do. It just posts to Micro.blog and that is about it. It can’t edit or delete posts or manipulate image uploads. You can use the Micro.blog website for that or a full featured blog editor like MarsEdit.

If there is community interest in expanding what Humboldt can do, I’m interested in hearing about it. Let me know what you would like to see on Humboldt’s GitHub Discussions site.

Drummer

The Oldest and Newest Thing in Blogging

Dave Winer just released a new project called Drummer. Drummer is an outliner that has been especially adapted to blogging. If you are into either outliners or blogs, this is an interesting development. Dave is probably the oldest name in outlining, blogging, and podcasting applications. You can check out his Wikipedia page for a full history.

I’m not very familiar with the history of outliners and blogging, but I do know in certain circles, especially the Mac community, early blogs were based on outlines. Over the years it seems like those two have drifted apart into their own applications and communities.

Personal Interest

I stumbled into the blogging world long before I had heard of blogs. In the very early 2000’s personal blogs weren’t very well known, but sites like Slashdot were. Slashdot was what we would now refer to as a link blog. A link blog is one that links to external articles and provides commentary about them. I decided I wanted my own link blog and so I built one based on the (at the time) open source Jive forum software. The site itself never turned into anything, but it got me my first Java consulting gig.

Like many people I eventually drifted away from blogs into the world of social platforms. I eventually got interested in doing Apple platform development and started looking for a good open source application to work on. I found NetNewsWire. I originally began work on NetNewsWire because the code was a good model to learn advanced techniques from. Along the way I rediscovered how amazing blogging is.

Through Brent Simmons, the founder of NetNewsWire, I learned about how useful outliners could be. I was familiar with outliners, but hadn’t used them to their full potential. I got more and more interested in outliners and eventually began work on Zavala. Through Brent, I also learned about publishing outlines as blogs.

Outlines as Blogs

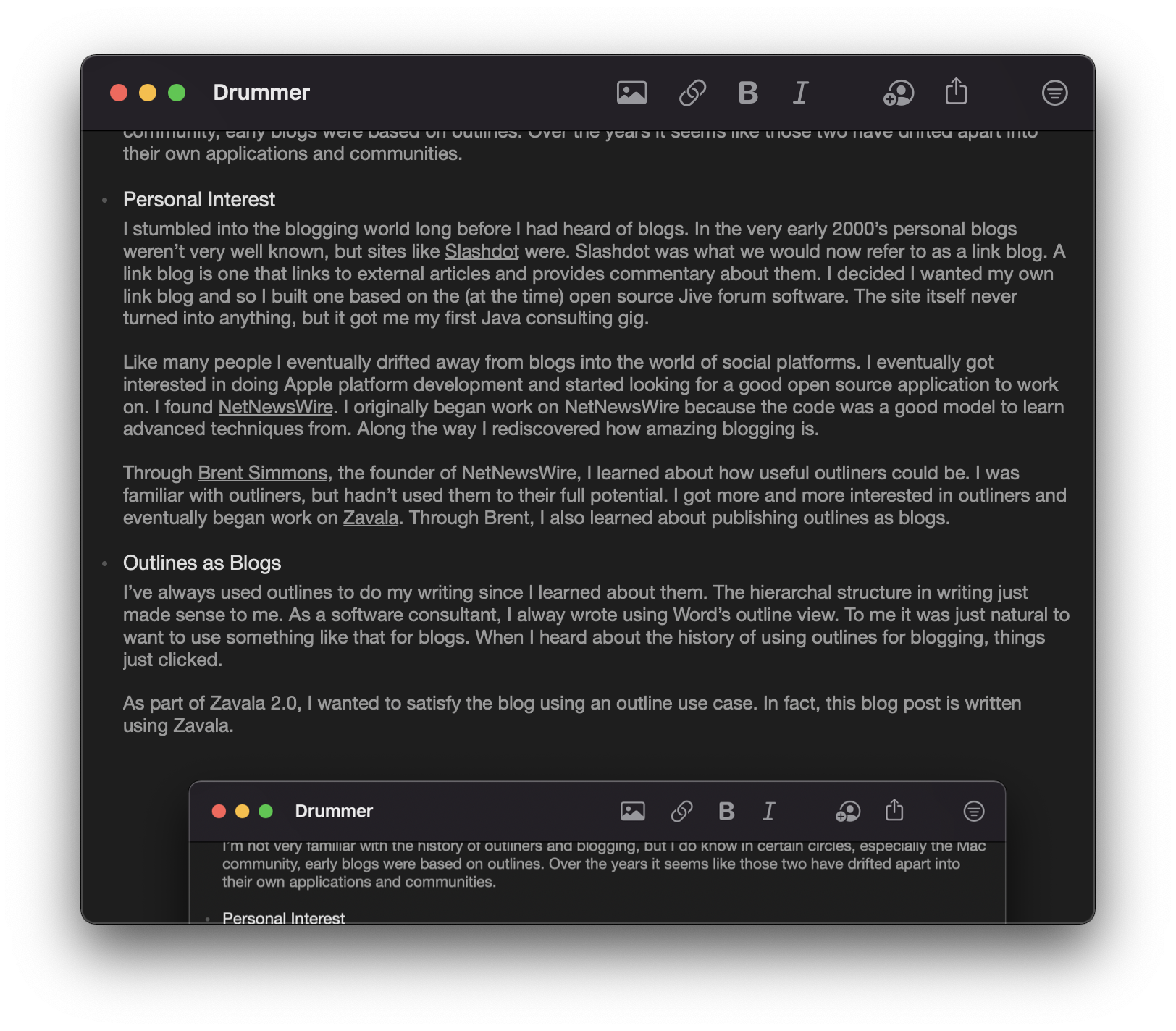

I’ve always used outlines to do my writing since I learned about them. The hierarchical structure in writing just made sense to me. As a software consultant, I always wrote using Word’s outline view. To me it was just natural to want to use something like that for blogs. When I heard about the history of using outlines for blogging, things just clicked.

As part of Zavala 2.0, I wanted to satisfy the use case of blogging from an outline. In fact, this blog post is written using Zavala.

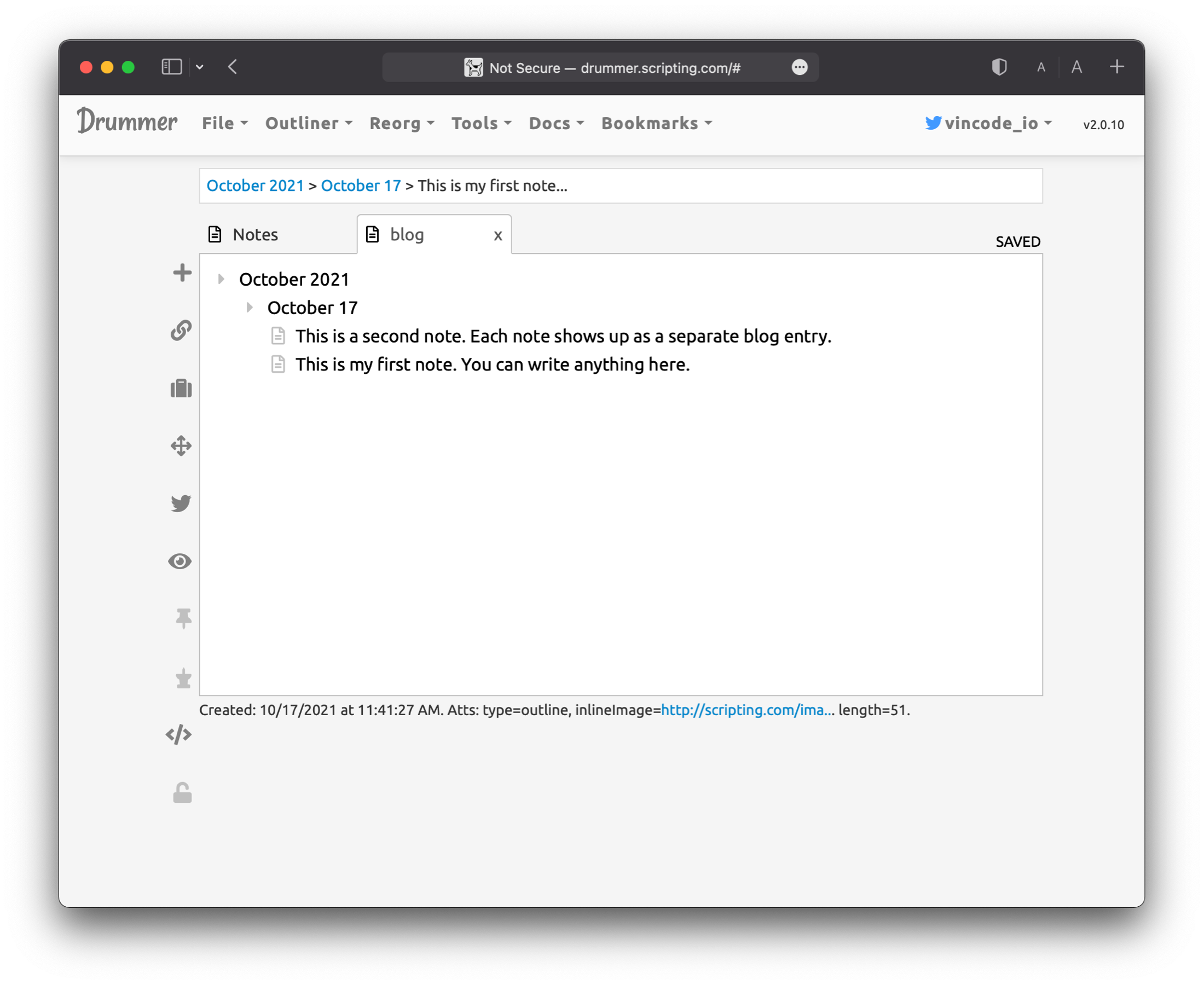

To publish this outline, I run a Shortcut that uploads images and HTML to my Micro.blog blog. Drummer works somewhat differently. Zavala considers an outline a document that gets uploaded as a post. Drummer is designed to group multiple entries under a published date. Each entry is a separate blog post, but they are all grouped together by date. It also has a publish step, but what happens, happens behind the scenes.

It is important here to point out some terminology differences. A “note” in Zavala is associated with a row. It provides additional detail for the topic. When publishing docs or blog posts from Zavala a topic is a group heading and a note is paragraph text.

A “note” in Drummer is a row that is a blog entry. I’m not sure what makes a “note” row different than a regular row under a date. They seem to publish the same, but the icon is different somehow.

Drummer has the concept of a microblog post, which Zavala doesn’t have. This is similar to what you see on Micro.blog or Twitter. It is a short post that doesn’t have a title. If you add a child row under a row that is under a date, you get a full blog post where the child rows are paragraphs and the row is the blog title.

Drummer’s take on blogging is a little bit different than the common definition. I think Drummer is set up well for a prolific writer or someone who posts a lot in smaller chunks throughout the day. It scales up well for long posts too, or for quick posts just to express an opinion.

What’s next

If I’ve sold you on blogging using outlines, check out this nice article on blogging with Drummer. You will eventually be able to do blogging on some platforms with Zavala 2.0, but that won’t be ready until early 2022. In the meantime, Drummer is something to try.

Some caveats about Drummer though. You will have to think differently if you are used to common forms of blogging. Using a single outline for all your blog entries is very different than publishing different documents as blog entries.

Drummer is also complicated in some ways. For example, adding an inline image requires you to edit a row’s attributes, enter a special key, and link out to a site that is hosting your image. Drummer is new though and it isn’t like this is something that can’t be made easier in a follow up release.

I’m going to be keeping an eye on Drummer for things I can learn from it. I’ll also be looking for opportunities for interoperability. There might be something there that can make both projects better.

Shortcuts

I’ve been spending a lot of time lately working with Shortcuts. I have some thoughts about them.

Adding Shortcut support to Zavala

One of the early requirements for Zavala was that it should be able to be automated or scripted. Since Zavala works on both iOS and macOS, these scripts should be able to be shared.

Prior to WWDC21 there wasn’t a shared scripting environment common between iOS and macOS. AppleScript was macOS only and Shortcuts was iOS only. I eventually decided that I would embed a Python runtime and editor to get cross-platform scripting to work.

When Shortcuts for macOS was announced at WWDC21 this year, I now had a different option. My only concern was if Shortcuts would be powerful enough to script a full outliner.

Discovering Shortcuts

I had briefly worked on Shortcut support a couple years ago for NetNewsWire but we ran into some roadblocks. These have since been removed. Still, because of them we didn’t get the full Shortcuts support we would have wanted and I didn’t fully learn how to use Shortcuts.

Cut to a couple years later and I wanted to do some really powerful automation with Zavala. For example, I wanted to post outlines as blog posts on both Micro.blog and a Jekyll blog. I wanted to dynamically create outlines.

I’m happy to say that with some enhancements to Zavala to support Shortcuts, I’ve been able to do everything (thus far) that I’ve set out to do. I’ve written over a dozen Shortcuts, some of them fairly complicated. I was surprised at what I could accomplish with a graphical programming language.

Shortcuts are Very Capable

I’ve come to think of Shortcuts as the graphical programming equivalent of Unix shell scripts. You are expected to piece together a lot of small Shortcut actions to make a full Shortcut. Each of the Shortcut actions takes an input and provides an output.

One of the big differences between Shortcuts and shell scripts is that you can define the type of data that you are using for input and output. Shortcuts has something called the Content Graph engine, which can convert data to different types on the fly, so using typed data isn’t as verbose as it could be. For example, you could pass an array of URLs to an action that only accepts a single URL. Shortcuts will automatically pick the first (usually the only) item in the array without you having to specify it. Or you could pass a URL to an action that takes a Text input and Shortcuts will convert it to text.
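To make those two conversions concrete, here is a toy model in Python. It is not how Apple implements the Content Graph, and nothing here is a Shortcuts API. The `URL` class and the `coerce` function are invented purely to illustrate "array to single item" and "URL to text".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class URL:
    """Minimal stand-in for a typed URL value (hypothetical, not a Shortcuts type)."""
    address: str


def coerce(value, target_type):
    """Toy coercion: unwrap a list to its first item, then convert URL to text if asked."""
    if isinstance(value, list) and target_type is not list:
        if not value:
            raise ValueError("cannot coerce an empty list")
        value = value[0]  # pick the first (usually the only) item
    if isinstance(value, target_type):
        return value
    if isinstance(value, URL) and target_type is str:
        return value.address  # a URL handed to a Text input becomes text
    raise TypeError(f"no conversion from {type(value).__name__} to {target_type.__name__}")


urls = [URL("https://zavala.vincode.io"), URL("https://micro.blog")]

single_url = coerce(urls, URL)  # an action that accepts only one URL
as_text = coerce(urls, str)     # an action that accepts Text
```

The caller never says "take item 0" or "convert to string". The receiving action's declared input type is enough, which is why typed data in Shortcuts ends up less verbose than you might expect.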

Shortcomings

In iOS 15 and macOS 12, the Shortcut editor is buggy. Embarrassingly buggy. The delete button for actions almost never works. Yes, something as simple as a button fails at the simplest of tasks. And the editor crashes a lot. Fortunately, the execution engine for Shortcuts seems to be stable and to work well.

One thing I would like to see is the ability to add a Shortcut to the Share menu on macOS. You can already add it to the Share menu on iOS. You can add a Shortcut to the Services menu on macOS, so maybe that is the more appropriate location. I do think users are going to expect Shortcuts to be able to be available in the macOS Share menu since it shares an icon with the iOS equivalent.

The biggest thing I think is missing is an API to execute Shortcuts directly from within your application. Say, I write a Shortcut that moves all completed items in an outline to a row called “Done” at the bottom of the outline. I would like to be able to execute this Shortcut without leaving the application and without focus being given to the Shortcuts app. Right now that isn’t possible to the best of my knowledge.

The Future

It is pretty clear to me that Shortcuts are the future of Apple platform automation. It is powerful while still being accessible to non-programmers. I hope that this year’s quality problems are a short-term setback, because I’m all in for automating Zavala using Shortcuts.

Some cool stuff is coming in Zavala 2.0. zavala.vincode.io/2021/10/0…

My outliner, Zavala, has a new release in beta testing. There are lots of new features and I sure could use some help testing it. zavala.vincode.io/2021/09/2…

Here’s a great article about @NetNewsWire with some excellent quotes from @brentsimmons. It really gets into how Brent and the team think about NetNewsWire’s place in the world. www.lifewire.com/how-netne…

Zavala 1.0 released

For the past 8 months or so, I’ve been working on a new app called Zavala. It’s a modern take on a traditional outliner app.

I think it is a pretty decent app, considering it was developed by a single person over 8 months. It isn’t easy making an app that runs on 3 different platforms and does syncing and collaboration across those platforms. To give credit where due, it wouldn’t have been possible without some recent enhancements to Apple’s APIs. Catalyst and CloudKit are big advantages that I have that developers 5 years ago didn’t have. Check out the full feature list here.

One thing I am particularly proud of is how the UI is animated. Adding and removing rows isn’t jarring and gives a solid feel to the application. For example if you are collaborating on an outline with another user, if they insert a row, the outline opens where the row goes and puts it there. There is never a situation where everything just blinks and stuff is in different locations.

The next version, 1.1, will support images embedded into the text of the outline. It will also support some other commands that other outliners usually support. I’ll be adding keyboard shortcuts for moving a row up or down in the outline. I’ll also be adding a way to add rows below, either indented or outdented. I hope to have this release out by Fall.

Version 2 will be coming much later and will require macOS 12 and iOS 15. This is so that I can take advantage of the latest automation APIs. With Shortcuts now on the Mac, the path forward for automation on Apple’s platforms is clear. I plan to make Zavala’s Shortcuts capabilities very robust so that custom workflows and integration with other apps are first class. I’m very excited to see how users automate Zavala in the future.

If you are into outliners, you should give Zavala a try. It is free and Open Source and will be forever, so you have nothing to lose. If you are into task managers or note taking you should give outliners a try. You might be surprised at how creating hierarchies of that information is useful.

Sourdough Bread

A lot of people have taken up sourdough bread making during the pandemic. My wife is one of those. She’s gotten pretty good at it. Behold her latest creation.

The open source outliner, Zavala, that I have been building now has a website and is available for beta testing. Check it out. zavala.vincode.io

Keeping Busy

Update

I thought I’d let people know what I’ve been working on lately. I’ve been keeping busy!

NetNewsWire 6.0

My main project that I’ve been working on for the last couple years is NetNewsWire. This project is amazing to work on. The community that Brent Simmons has created is great. The popularity of NetNewsWire is great too. It really helps get you motivated when so many people benefit from and appreciate your work.

For the 6.0 release cycle I did the Big Sur UI refresh, iCloud syncing, and the Reddit and Twitter extensions. I also finished up the work that another developer had started on the Reader API code. That got us BazQux, The Old Reader, Inoreader, and FreshRSS syncing. I did some other small things for 6.0 too, like the Share Extension.

Lots of other developers contributed to 6.0 including in big ways like implementing NewsBlur syncing and other UI changes. NetNewsWire 6 is a big deal for the team. I hope you check it out and give it a try.

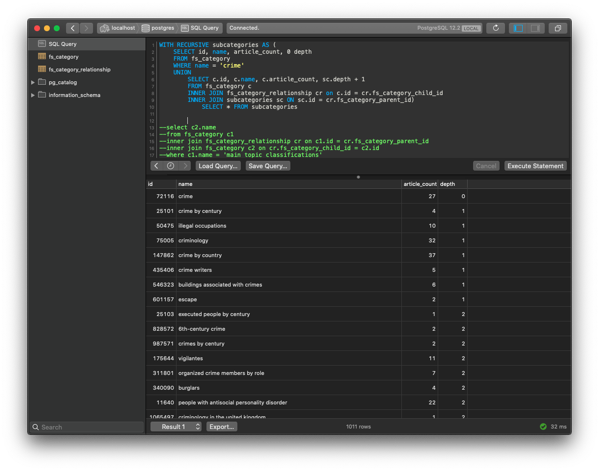

Feed Spider

This project has been on hold for about 6 months. I’d still like to tackle the feed discovery problem. For those not familiar with Feed Spider, I was working on creating a searchable blog directory. Look through my back posts in this blog for details.

I still want to tackle this again. I paused my work on it to learn more about Machine Learning, but then I got distracted with building an outliner application. I’ll probably work on this more in the coming year as a way to mix up my development work and not get bored.

Zavala

I built an outliner application called Zavala. This is a fun project. I’m a bit of an outliner novice and didn’t use one on a regular basis. Now I use one constantly and for all kinds of different things. Organizing my thoughts, planning and managing projects, and even just as a TODO manager.

I just released the first Alpha builds of Zavala. That means that from here until the release, I’m only fixing bugs. No new features are planned for the 1.0 release. This shouldn’t take up much time and I’ll probably work on some other things in the meantime.

What’s Next

I’m not sure what’s next. There are some things I would like to see in NetNewsWire 6.1, so there will be some work done there for sure. I’ll work on small features for Zavala to fill in the time. Maybe I’ll even get to spend some time on Feed Spider.

And we can’t forget that WWDC is coming. After Apple releases its updated frameworks, there is always updating that has to be done to existing applications. That often takes up a good chunk of an Apple developer’s summer. Maybe by Fall things will be slowing down for me. Then again, I’ll probably just find something new and shiny to start chasing.

My wife’s best friend is #1 & #2 in Amazon’s Women in Politics category! She’s beating out many political big names. https://www.amazon.com/gp/new-releases/books/5571264011/ref=zg_b_hnr_5571264011_1

My Uncle Terry

Last February, Nicole and I were wintering in Arizona. We were down there living out of the van, like we normally try to do in the winter. We were fairly close to where my Uncle Terry had a winter home in Yuma, AZ, so we went to visit him and stayed just across the border in California on some BLM land.

It was pretty much just desert there, but that didn’t matter. We were going to be spending our days with Terry and his partner Irene. They took us to see the Yuma Territorial Prison and out to eat at all their favorite spots. We had a blast visiting with them and catching up.

Uncle Terry died today from complications from COVID-19. He was older, but in otherwise good health until the virus. When last I saw him he was just as sharp as I’d ever seen him mentally. He was taken before his time was up.

I’m going to miss that man. We didn’t spend enough time together over the years and I regret that. Terry was a great story teller and always so much fun to be around. There was always an abundance of laughter when Terry got on telling stories. He also loved cars and collected them, so he and I never ran out of things to talk about.

My family is heartbroken. We’re heartbroken and also angry. It didn’t have to be like this.

Privately owned firetrucks

I was reading a blog post about firetrucks and it reminded me of a #vanlife post I never got around to writing.

In late January, 2019 we were traveling around the U.S. and ended up on Padre Island. Padre Island is just off the coast of Texas. You can drive to the island over a bridge and can camp for free most anywhere you want to along the beach. Even in the winter it is warm there and quite beautiful if you like the ocean.

As beautiful as it is, there isn’t much to do except watch the ocean. So a couple times a day, I would walk up and down the shore line, and sometimes see some wildlife.

People were well spread out along the beach. On my walks I got to meet lots of interesting people with all different kinds of mobile living arrangements. By far the most interesting was the firetruck conversion.

I’d walked by this contraption several times before I caught the owner outside showing it off for someone and could discuss it with him. He was a former boat builder that had a fascination with firetrucks.

The rig is part firetruck, part boat, and part tow truck. You can clearly see the boat part of the vehicle that has been added to the firetruck. There is also a ramp (extended in the picture) that can load and carry a car.

I’ve seen a lot of unique builds while traveling and this one is definitely the most unique.

Feed Spider - Update 8

I’ve been doing a lot of reading and a lot of soul searching lately. Having to dig deep into Machine Learning wasn’t on my 2020 list of things to do. I’d really planned to spend this year improving my Apple platforms developer skills. Learning Python and a bunch of new concepts is a real detour for me.

To better understand if Machine Learning was something I wanted to go ahead with, I did some research on how much education you need to get into it. It turns out there is quite a bit to it, but there is also a good deal of overlap with my existing skillset. For example, my business programming career left me with lots of skill at manipulating and cleansing large amounts of data programmatically. That and being able to program at all are good starting points.

The other big prerequisite is math. Specifically linear algebra, statistics, and probability. I used to know how to do that stuff, but that was 30 years ago. The good news is that the Khan Academy has courses that I can take for refreshers. All this Machine Learning stuff is within my reach.

I’ve decided to go ahead and get proficient with Machine Learning. It is a skill not too far out of my grasp. Besides, I need something to do.

Coursera has a Machine Learning course that they are giving away during the pandemic. Coursera looks like a great way to get your credit card charged for classes you haven’t taken or signed up for. At least that is what the online reviews say. Needless to say, they won’t be getting a credit card number from me. I am going to take the free class. I’m dubious as to how good it will be, but as long as it isn’t giving me outright misinformation, I think I’ll be ok.

After that, I’ll be taking the Khan Academy classes to get my math back up to par. In the meantime, I’m following the Towards Data Science blog. They put out lots of good material and the more I read of it the more I’m beginning to understand.

All of this will take some time. If I make any progress towards Feed Spider, I’ll blog about it. Don’t expect much for a while though. 🤓

Feed Spider - Update 7

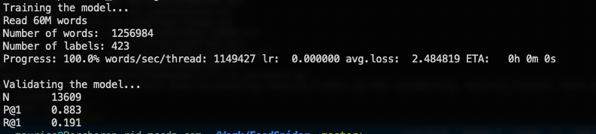

I made two changes in my latest run. I probably should only make one change at a time to be able to narrow down what is helping and what is setting me back. Still, I went ahead and moved down one more level for the targeted categories. This gave me a lot more categories that would come up. My second change was that I only selected the categories that were closest in relationship to the article category. I estimate the net effect to be more categories overall and fewer category labels per article.

I ran the processes needed up to the supervised training. That I started and took a 4 hour break while it ran. I should have waited around and checked the ETA for completion before taking my break. When I got back to it, there were still 11 hours of training remaining.

I wouldn’t consider it a big deal just to go and do something else for 11 hours, except that the supervised training was using 100% of all the CPUs on my work computer. I’m impressed with how responsive macOS stays while under that kind of load. If all I wanted to do was some light work, I could just let it run. What I really wanted to do was fix bugs in NetNewsWire while it ran. Compile times are just too frustratingly long while the rest of the computer is maxed out.

I figured it was finally time to spin up an on demand Amazon instance. After doing some superficial research, I decided to give Amazon’s new ARM CPUs a go. They have the best price to performance ratio and since everything I’m doing is open source, I can compile it for ARM just fine.

The first machine I picked out was a 16 CPU instance. I got everything set up and started the supervised training. It was going to take 10 hours. Not good enough, so I detached the volume associated with the instance so that I wouldn’t have to set up and compile everything again. I attached the volume to a 64 CPU instance and tried again. 10 hours to run. I checked and was only getting 1200% CPU utilization.

I’d assumed that fastText was scaling itself based on available CPUs since it was sized perfectly for my MacBook. I have 12 logical processors and it was maxing them out. It turns out that you have to pass a command line parameter to fastText to set the number of threads for it to use. 12 is the default and by coincidence matched my MacBook perfectly.

I restarted again using 64 threads this time, expecting great things. Instead I got NaN exceptions from fastText. Rather than dig through the fastText code to find the problem, I took a shot in the dark and started with 48 threads. That worked and had an ETA of 3 hours. A 48 CPU instance is the next step down for Amazon’s new ARM CPU instances, so that is where I’ve settled in for my on demand instance.
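For anyone who hits the same wall, the parameter in question is fastText’s `-thread` option. Here is a small sketch that builds the training command with an explicit thread count instead of relying on the default of 12. The file names are placeholders, and the cap of 48 is only what happened to work for me on this data, not a documented fastText limit.

```python
import os


def fasttext_supervised_command(train_file, model_prefix, threads=None, max_threads=48):
    """Build a `fasttext supervised` command line with an explicit -thread value."""
    if threads is None:
        # Default to every logical CPU on the machine rather than fastText's default of 12.
        threads = os.cpu_count() or 1
    # Assumption from my own runs: 64 threads produced NaN errors, 48 did not.
    threads = min(threads, max_threads)
    return [
        "fasttext", "supervised",
        "-input", train_file,
        "-output", model_prefix,
        "-thread", str(threads),
    ]


# Hypothetical file names. Asking for 64 threads gets capped at 48.
command = fasttext_supervised_command("train.txt", "model", threads=64)
print(" ".join(command))
```

The resulting list can be passed straight to `subprocess.run` on a machine where the `fasttext` binary is on the path.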

As a sidebar, I would like to point out that this is a pretty good deal for me. The 48 CPU instance is $1.85 per hour to run. I’m not sure how to compare apples-to-apples with a workstation, but getting to a 40 thread CPU workstation at Dell costs around $5k. Since I’m primarily an Apple platforms developer, I wouldn’t have any use for it besides doing machine learning. It would mostly be sitting idle and depreciating in value. I would have to do more than 2,500 hours of processing to get ahead by buying a workstation. That’s assuming that a $5k workstation is as fast as the 48 CPU on demand instance, which I doubt it is.
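The break-even math is simple enough to check. This sketch uses only the two numbers above and ignores everything else (electricity, storage charges, resale value), so treat it as a rough estimate.

```python
def break_even_hours(workstation_price: float, hourly_rate: float) -> float:
    """Hours of on demand usage that would cost as much as buying the workstation."""
    return workstation_price / hourly_rate


# Roughly $5k for the workstation versus $1.85 per hour for the 48 CPU instance.
hours = break_even_hours(5000.0, 1.85)
print(f"{hours:.0f} hours")  # prints "2703 hours"
```

At 3 hours per training run, that is about 900 full runs before the workstation would pay for itself.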

After 3 hours, the model came out 70% accurate against the validation file. That’s pretty good, but what about in the real world? Pretty shitty still. Here is One Foot Tsunami again.

The new model simply doesn’t find any suggestions a lot of the time. See the “Pizza Arbitrage” above. The categories that it does find kind of make sense? The categories are trash for categorizing a blog though.

One of my assumptions when starting this project was that Wikipedia’s categories would be useful for doing supervised training. I really don’t know if that is the case. How things are categorized in Wikipedia is chaotic and subjective. You can tell that just from browsing them yourself on the web. My hope that machine learning would find useful patterns in them is mostly gone.

It is time for a change in direction. Up until this point, I had hoped to get by with a superficial understanding of Natural Language Processing and data science. I can see now that won’t be enough. I’m going to have to dig in and understand the tools I am playing with better as well as think about finding a new way of getting a trained model to categorize blogs.

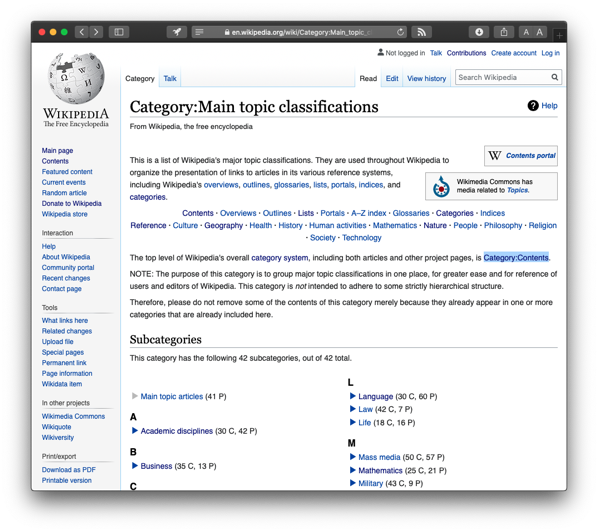

Feed Spider - Update 6

It took around 40 hours to expand and extract all of Wikipedia using WikiExtractor. In the end, I ended up with 5.6 million articles extracted. Wikipedia has 6 million articles, so WikiExtractor tossed out 400k of those. Possibly due to template recursion errors. That was something that WikiExtractor would occasionally complain about as it was working.

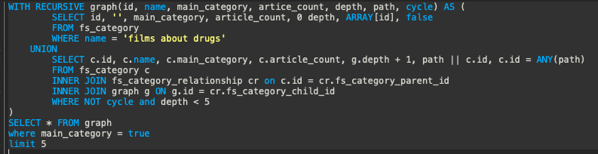

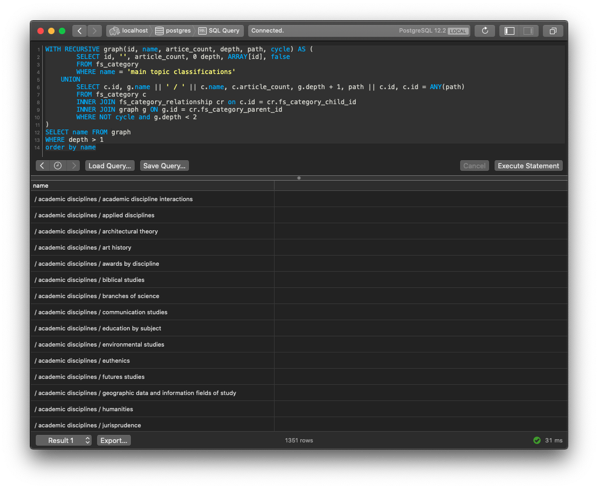

My next step was to fix that slow query that is used to roll up categories. I had no idea what I was going to do about it given the complexity of the query and amount of data that it was processing. Still, I thought I better do my due diligence and run an EXPLAIN against the query to tune it as much as I could.

I was surprised to see that the query was doing a full sequential scan of the relationship table. I thought that I had indexed its columns, but hadn’t. I only needed to have an index for one side of the relationship table, so I added it. I reran the query and it now consistently came back within tens of milliseconds as opposed to multiple seconds. This was a massive improvement.
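Here is a small sketch of the same kind of check, using SQLite as a stand-in since it ships with Python. The table, column, and index names are hypothetical, and indexing the child side is my assumption about which side a roll-up lookup would need.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical relationship table: one row per (child, parent) category pair.
conn.execute("CREATE TABLE category_relationship (child INTEGER, parent INTEGER)")
conn.executemany(
    "INSERT INTO category_relationship VALUES (?, ?)",
    [(i, i // 5) for i in range(1, 10001)],
)

QUERY = "SELECT parent FROM category_relationship WHERE child = ?"


def query_plan(connection):
    """Return SQLite's plan description for the parent lookup."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    return " | ".join(row[3] for row in rows)


plan_before = query_plan(conn)  # no index yet, so the whole table is scanned
conn.execute("CREATE INDEX idx_rel_child ON category_relationship (child)")
plan_after = query_plan(conn)   # the lookup now goes through the new index

print("before:", plan_before)
print("after: ", plan_after)
```

The plan text differs between database engines, but the shape of the fix is the same: the scan turns into an index search once the looked-up column is indexed.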

Another change I made was that I went down another level in categories from the main content category. This netted about 10,000 categories that we would roll up into, versus the hundreds we had before. My hope was that this level would provide more useful categories for blogs.

I had to rewrite the Article Extractor now that it wasn’t going to be processing raw Wikipedia data any longer. Now it would be reading the JSON files generated by WikiExtractor. This would be much faster, especially since I got the roll up query fixed. Last time I ran the Article Extractor, it took all night long to extract only 68,000 records. This time I ran it and processed 5.6 million records in less than 2 hours. 💥
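Reading that output is mostly a matter of walking the files line by line. This is a sketch, assuming WikiExtractor’s JSON mode writes one JSON object per line into files named `wiki_NN` with `title` and `text` fields. The `categories` field name is a guess at what the pull request version adds, not something I’ve confirmed.

```python
import json
from pathlib import Path


def iter_articles(extract_dir):
    """Yield one dict per article from every wiki_* file under extract_dir."""
    for path in sorted(Path(extract_dir).rglob("wiki_*")):
        with path.open(encoding="utf-8") as handle:
            for line in handle:
                line = line.strip()
                if line:  # skip blank lines between records
                    yield json.loads(line)


def summarize(article):
    """Return (title, categories) for one extracted article."""
    # "categories" is an assumed field name; default to an empty list if absent.
    return article["title"], article.get("categories", [])
```

Because it is a generator, only one article is held in memory at a time, which matters when there are millions of them.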

I was excited at this point and ran that output through the Article Cleaner to prepare it for training by fastText. That process is quick and only takes about ½ hour to run. Now for fastText training. I ran it with the same parameters as last time, just this time with a much, much larger dataset. fastText helpfully provides an ETA for completion. It was 4 hours, so I went to relax and have dinner.

After the model was built, I validated it and this time it only came out with 60% accuracy. That was a disappointment considering that it was 80% last time. Forging ahead, I ran the new model against a couple blogs. Testing against technology blogs gave varying and disappointing results.

The results for One Foot Tsunami are now more specific and more accurate. They still aren’t very useful. I decided I would try a simpler blog, a recipe blog, to see if that would improve results. These are the results for “Serious Eats: Recipes”.

At least it picked categories with “food” in the name a couple times. Still, the accuracy is off and the categories are not helpful. I need something that people would be looking for when trying to find a cooking or recipes blog.

I’m feeling pretty discouraged at this point. I think a part of me thought that throwing huge amounts of data at the problem would net much better results than I got. I have learned some things lately that I can try to improve the quality of the data. I’m not out of options and am far from giving up.

I think the next thing I will try though, is going down one more level in categories. Maybe the categories will get more useful. Maybe the accuracy will increase. Maybe it will get worse. I won’t know until I try.

CloudKit Extended Pauses

I’ve got something strange that happens with NetNewsWire’s CloudKit integration. I consider the code stable at this point. I’ve been running it for weeks across 3 different devices and they never go out of sync.

My problem is that the CloudKit operations seem to pause for extended periods of time. This could be an hour, but then it will just break loose and start working again. Restarting the app also clears the problem up. I’d suspect a deadlock of some kind, but it will start back up again without intervention.

What is strange about this is that it only happens on macOS. It never happens on iOS. It seems to be worse if my system is under load or if I’ve left NNW running for an extended period of time. It happens for both fetch and modify operations. I’m at a loss as to whether this is a test environment issue, something with my machine, or a coding problem. Has anyone ever seen anything like this before?

Feed Spider - Update 5

My first run at classifying blogs ended predictably badly. Not horribly bad, I guess. If you squint really hard, you could see that some of the categories kind of make some sense. They just were generally not useful due to category vagueness. The categories that were found were things like “culture” or “humanities” which could be almost anything. Things are going to have to get more specific and more accurate.

One of the things I noticed when I was validating the categories and their relationships that I extracted was that some were missing. It turns out that Wikipedia will use templates sometimes for categories. A Wikipedia template is a server-side include, if you know what that is. Basically, it is a way to put one page inside another page. I didn’t have a way to include template contents in a page while I was parsing it and was missing categories because of it.

I’ve started reading fastText Quick Start Guide and am about a ¼ of the way through it. I haven’t learned much about NLP, but I have gotten more tools to play with now. One of these is another Wikipedia extraction utility, WikiExtractor, and it handles templates!

Something I always do when looking at a new GitHub project is check out open issues and pull requests. It tells you a lot about how well maintained the project is. One open pull request for WikiExtractor is “Extract page categories”. I’m glad I saw that pull request, because I didn’t know that it didn’t extract categories. Also, I now had the code to extract those categories. I grabbed the pull request version and got to work.

I did a couple test runs and realized that although I was getting full template expanded articles with categories, I wasn’t getting any category pages. The category pages are how I build the relationships between categories. WikiExtractor is about 3000 lines of Python, all in one file. After a couple hours of reading code, I was familiar enough with the program to modify it to only extract category pages and bypass article pages. I’ll extract the article pages later.

I wrote a new Category Extractor that took input from WikiExtractor and reloaded my categories database. Success! I now had the missing categories. Before, I had about 1 million categories. Now I have 1.8 million. Due to this change and fixing some other bugs, my category relationship count went up from 550,000 entries to 3.1 million. This is a lot higher fidelity information than I had loaded before.

The larger database makes a problem I had earlier even harder now: how to roll up categories into their higher level categories. This was a poor performer before, and now that I will be extracting articles again and assigning them categories, I’m going to have to make it go faster. It ran so slowly that I only had 68,000 articles to train my model with and I want to use a lot more than that next time.

That’s the next thing to work on. In the meantime, I’m running WikiExtractor against the full Wikipedia dump to give me template expanded articles. This is running much slower than when I just extracted the category pages and may take a couple days to complete. My poor laptop. If I have to extract those articles more than once using WikiExtractor, I’m going to set up a large Amazon On-Demand instance to run it on. Hopefully, it won’t come to that.

Feed Spider - Update 4

I put a test harness around the prediction engine for fastText. The test harness downloads and cleans an RSS feed and asks for the most likely classification. Here are some results from One Foot Tsunami:

Each row is an article title from the feed followed by the classification derived from the article content. I’m both encouraged and strangely disappointed at the same time. Things seem to be working, but clearly I need to do some work on what my categories are.

Initially, I tried combining all the articles in the feed and running that through the prediction engine. It always gave “chronology” back as the classification. Individual articles seem to give better results. I’ll probably end up classifying by article and taking the most common classifications as the feed’s.
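A sketch of that per-article voting idea follows. The `predict` argument stands in for whatever calls the trained model. The `fake_predict` function and the sample titles are invented purely so the example runs.

```python
from collections import Counter


def classify_feed(articles, predict, top_n=3):
    """Classify each article separately, then keep the most common labels."""
    votes = Counter(predict(text) for text in articles)
    return [label for label, _ in votes.most_common(top_n)]


# Stand-in predictor for illustration only; the real one would query the model.
def fake_predict(text):
    return "food" if "pizza" in text.lower() else "chronology"


feed = ["Pizza ovens at home", "Best pizza dough", "Events of 1999"]
print(classify_feed(feed, fake_predict))
```

Voting per article means one odd classification can’t swamp the whole feed the way the combined text did.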

I think “chronology” might be the default classification in the model. I see it come up a lot. Looking at the Wikipedia page for Category:Chronology has me thinking anything with a date in it will roll up to it. It looks like there will be troublemaker categories that I have to delete from the database, like “chronology”. I’ve already eliminated the ones with the word “by” in them. These were things like “Birds by state” which would clearly better be described by another classification.
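The filtering rule can be sketched as below. I did the real cleanup in the database, so this Python version is just an illustration of the rule, and the `keep_category` helper and sample names are hypothetical.

```python
import re

# Categories singled out as troublemakers so far; extend as more turn up.
BLOCKED = {"chronology"}
# Match "by" only as a whole word, so "Byzantine art" is left alone.
BY_WORD = re.compile(r"\bby\b", re.IGNORECASE)


def keep_category(name):
    """Return False for categories that should be dropped before roll-up."""
    if name.lower() in BLOCKED:
        return False
    return BY_WORD.search(name) is None


names = ["Birds by state", "Chronology", "Birds", "Byzantine art"]
print([n for n in names if keep_category(n)])
```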

I think I’ll probably fall into a cycle of tweaking the categories and then running the rest of the flow to see how well the predictions improve. That means making that slow category roll-up query run faster. I think I have my work cut out for me tomorrow.

Feed Spider - Update 3

Yesterday, I had just gotten the categories and category relationships loaded into the relational database and identified the categories I want to use for blogs. The next step was rolling up all the hundreds of thousands of categories into those roughly 1300 categories.

I came up with a query that I thought would work. This isn’t easy because Wikipedia’s categories aren’t strictly hierarchical. They kind of are, but it is really more of a graph than a hierarchy. What I mean by this is that a specific article can have multiple top level categories and there are many paths to get there. You can see this if you click on one of the categories at the bottom of a Wikipedia article. It will take you to a page about that category and at the bottom of that page are more categories that this one belongs to. Since there is more than one, the path to the top isn’t obvious and is plural.

One pitfall to walking a graph like this is getting stuck in a loop. For example category A points to category B, which points to category C, which points to category A. Another problem is getting back to too many top level categories. Finally, you have to deal with the sheer number of paths that can be taken. Say the category we are looking for has 5 parent categories and those have 5 each and those have 5 each. That’s 125 paths to search after only going up 3 levels.

In the end I put code in to limit recursion, limited to 5 resulting categories, and only 4 levels of searching upwards. The query still takes about 700ms to run which is very slow. That is not good.
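To make those limits concrete, here is an in-memory sketch of the same idea. The real thing is a database query, so this is not that query, just a breadth-first walk with the same three guards. The `roll_up` function, the `parents` dictionary, and the sample categories are all hypothetical.

```python
from collections import deque


def roll_up(start, parents, targets, max_depth=4, max_results=5):
    """Breadth-first walk up the category graph from `start`.

    parents: dict mapping a category to the list of its parent categories.
    targets: set of categories we want to roll up into.
    """
    found = []
    seen = {start}  # guards against loops such as A -> B -> C -> A
    queue = deque([(start, 0)])
    while queue and len(found) < max_results:
        category, depth = queue.popleft()
        if category in targets:
            found.append(category)
            continue  # stop climbing once a target is reached
        if depth == max_depth:
            continue  # do not search above the depth limit
        for parent in parents.get(category, []):
            if parent not in seen:
                seen.add(parent)
                queue.append((parent, depth + 1))
    return found


# A points to B, B to C, and C back to A, with two targets reachable on the way.
parents = {"A": ["B"], "B": ["C", "Science"], "C": ["A", "Culture"]}
print(roll_up("A", parents, {"Science", "Culture"}))
```

The `seen` set handles the loop problem, `max_depth` caps how far up it climbs, and `max_results` stops it from coming back with too many top level categories.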

When building a complex system it is important to address architectural risk as early as possible. I’m sure you have heard stories of projects getting cancelled after spending months or even years of development. Lots of times this is because a critical piece of the architecture proved unviable late in the project. Tragically a lot of work has usually been done that relied on that piece before discovering that it won’t work.

The biggest piece of architectural risk in this system is the machine learning parts. We want to get to that as quickly as possible so that we don’t end up doing work that may end up being thrown away. So I decided to move on to building the Article Extractor instead of optimizing the query or even trying to make it more accurate. We can come back to it later after risk has been addressed.

The Article Extractor being very similar to the Category Extractor didn’t take long to code and test. Its job is to read in the Wikipedia dumps for an article, assign the roll-up categories, and write it out to a file. Since it relied on the slow query for part of its logic, I knew it would be slow. So I fired up 9 instances of the Article Extractor and let it run for about 14 hours.

When I finally checked the output of the Article Extractor it had produced only 68,000 records. That isn’t very much, but should be enough for us to move on to the next step. We can go back and generate more data later if this doesn’t prove sufficient.

The next step is to prepare fastText to do some blog classifications by training the model. I don’t know much of anything about fastText yet. I’ve bought a book on it, but haven’t read it. To keep things moving along, I adapted their tutorial to work with the data produced thus far.

I wrote the Article Cleaner, see flowchart, as a shell script. It combines the multiple output files from the Article Extractor processes, runs them through some data normalization routines, and splits the result 80/20 into two separate files. The bigger file is used to train the model and the smaller to validate it.
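The split step can be sketched like this. The actual Article Cleaner is a shell script, so this Python version only illustrates the 80/20 idea. The shuffle before splitting is my assumption, and the sample records are invented. The `__label__` prefix is fastText’s standard way of marking the category on each line.

```python
import random


def split_80_20(lines, seed=0):
    """Shuffle the labeled lines and split them 80/20 into train and validation sets."""
    shuffled = list(lines)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = len(shuffled) * 8 // 10
    return shuffled[:cut], shuffled[cut:]


# Hypothetical records in fastText's supervised format: "__label__<category> <text>".
records = [f"__label__category{i % 3} sample article text {i}" for i in range(10)]
train, valid = split_80_20(records)
print(len(train), len(valid))
```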

Supervised training came next. I fed fastText some parameters that I don’t understand fully, but that come from the tutorial, and validated the model. I was quite shocked when the whole thing ran in under 3 minutes. The fastText developers weren’t lying when they named the project.